The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail ⚖️

As AI enters courts and welfare systems worldwide, the COMPAS debate reveals a critical lesson: fairness depends on context, and exporting models without reform risks scaling inequality.

AI Fairness 101 - Real-World Incidents

Part 4 of 4

Table of Contents

- 🎥 Explained: The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail ⚖️

- 🧩 1. What Happened: A Tale of Two Truths

- 🔗 2. Impact: When Statistics Become Sentences

- ☠️ 3. Lifecycle Failure: The Poisoned Well

- ⚔️ 4. Bias Types: A Clash of Definitions

- 🌍 5. Global South Lens: Exporting Inequality

- 🧠 6. Bigger Picture: Can We Code Our Way to Justice?

- ❓ Curiosity Provocations

- 🧵 Final Reflection: The Human System Behind the Machine

- 📚 References

- 📥 AI Fairness 101 — Real-World Incidents: The COMPAS Algorithm Scandal Case Deck (PDF)

- 🔎 Explore the AI Fairness 101 Series

- 💬 Join the Conversation

- 🌍 Follow GlobalSouth.AI


🎥 Explained: The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail ⚖️

🧭 A Deep Dive into the COMPAS Fairness Dilemma

⚖️ The Algorithm’s Forked Tongue

🤖 When algorithms speak with two voices, justice becomes a matter of mathematics.

🧩 1. What Happened: A Tale of Two Truths

For decades, the U.S. criminal justice system has moved toward 📊 evidence-based sentencing.

The goal: reduce inconsistent judicial discretion.

The tool: actuarial risk models.

The promise: ⚙️ objectivity and scientific rigor.

That promise fractured in 2016 amid the controversy surrounding COMPAS, the Correctional Offender Management Profiling for Alternative Sanctions system.

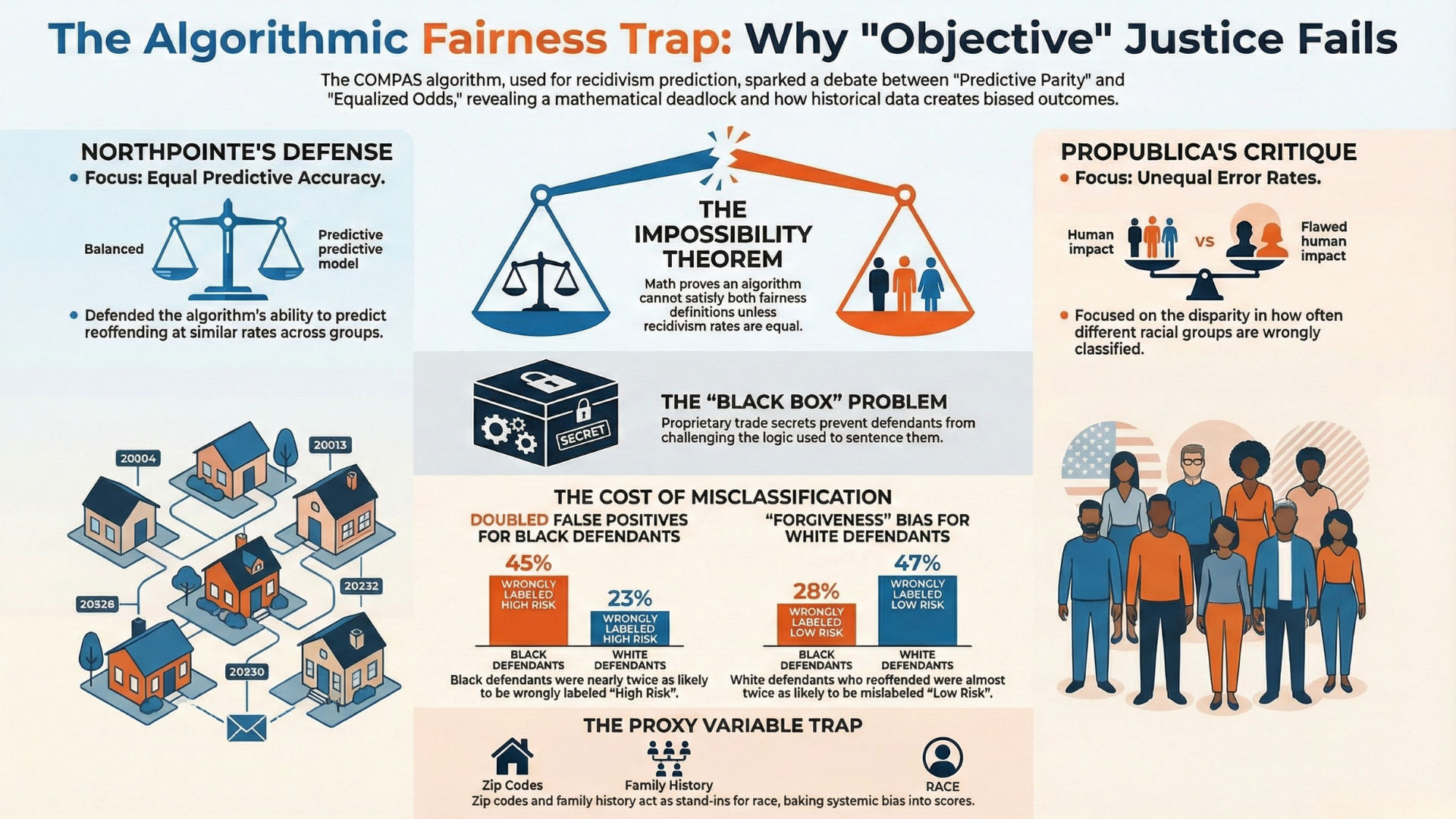

🥊 The Core Conflict

Two institutions. Two truths.

- 📰 ProPublica → investigative nonprofit

- 🏢 Northpointe (now equivant) → COMPAS developer

ProPublica analyzed ~10,000 defendants in Broward County, Florida.

Later fairness benchmarks built on the AIF360 toolkit use a filtered version of this dataset with 6,167 rows, 5,723 of them for Black and White defendants.

📌 Controversy Cheat Sheet

🚨 The Accusation (ProPublica)

The algorithm is racially biased.

- Black defendants who did not reoffend were nearly 2× more likely than White defendants to be labeled High Risk.

- White defendants who did reoffend were more likely than Black defendants to be labeled Low Risk.

🛡️ The Defense (Northpointe)

The algorithm is fair.

- It maintains predictive parity.

- A risk score has the same statistical meaning across races.

🧮 The Mathematical Deadlock

At the heart lies the Fairness Impossibility Theorem:

❗ No model can satisfy both equal error rates and predictive parity unless base rates are equal or accuracy is perfect.

Because measured arrest and recidivism rates differ across groups, a gap driven in part by systemic factors like over-policing, both sides are technically correct: each is reporting a different fairness metric.

⚠️ The result: a mathematical conflict with human consequences.
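The deadlock can be made concrete with a standard confusion-matrix identity that links the false positive rate (FPR) to the base rate, the positive predictive value (PPV), and the false negative rate (FNR). The sketch below is illustrative only: the two groups and every number in it are hypothetical, not COMPAS figures. It shows that if PPV and FNR are held equal across groups, different base rates force different false positive rates.

```python
def implied_fpr(base_rate: float, ppv: float, fnr: float) -> float:
    """False positive rate forced by the identity
    FPR = p / (1 - p) * (1 - PPV) / PPV * (1 - FNR),
    where p is the group's base rate of reoffending."""
    return base_rate / (1 - base_rate) * (1 - ppv) / ppv * (1 - fnr)


# Hypothetical groups: identical PPV and FNR, different base rates.
fpr_group_a = implied_fpr(base_rate=0.5, ppv=0.6, fnr=0.3)
fpr_group_b = implied_fpr(base_rate=0.4, ppv=0.6, fnr=0.3)

print(round(fpr_group_a, 3), round(fpr_group_b, 3))  # 0.467 0.311
```

With predictive parity held fixed, the only ways to close the FPR gap are to equalize the base rates or to make the classifier perfect, which is the condition the theorem states.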

🔗 2. Impact: When Statistics Become Sentences

Choosing a fairness metric is not academic.

It defines who loses freedom.

When COMPAS labels someone High Risk, the label can contribute to:

- 💰 Higher bond

- 🚫 Denial of parole

- ⛓️ Longer incarceration

📉 The Human Cost of Misclassification

| Error rate | Black Defendants | White Defendants |
|---|---|---|
| ❌ False Positive (High Risk, no reoffense) | 45% | 23% |
| ⚠️ False Negative (Low Risk, reoffended) | 28% | 47% |

🧠 Why This Matters

- 45% false positive rate → nearly half of Black defendants who did not reoffend were still labeled High Risk.

- 47% false negative rate → nearly half of White defendants who did reoffend were labeled Low Risk, so the system extended them more benefit of the doubt.
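For readers who want to see where such percentages come from, here is a minimal sketch of how the two error rates are computed from a confusion matrix. The counts are hypothetical, chosen only so that they reproduce the rounded rates in the table above; they are not ProPublica's actual counts, and the `Confusion` class is an illustrative helper.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # labeled High Risk, reoffended
    fp: int  # labeled High Risk, did not reoffend
    tn: int  # labeled Low Risk, did not reoffend
    fn: int  # labeled Low Risk, reoffended

    @property
    def false_positive_rate(self) -> float:
        # Share of non-reoffenders who were labeled High Risk.
        return self.fp / (self.fp + self.tn)

    @property
    def false_negative_rate(self) -> float:
        # Share of reoffenders who were labeled Low Risk.
        return self.fn / (self.fn + self.tp)


# Hypothetical counts (per 100 people in each outcome class).
black = Confusion(tp=72, fp=45, tn=55, fn=28)
white = Confusion(tp=53, fp=23, tn=77, fn=47)

for name, c in [("Black", black), ("White", white)]:
    print(name, c.false_positive_rate, c.false_negative_rate)
```

Note that each rate is conditioned on what actually happened (reoffended or not), which is why it answers a different question than "how often is a High Risk label correct?"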

👉 To understand why, we must examine the data feeding the machine.

☠️ 3. Lifecycle Failure: The Poisoned Well

COMPAS did not fail at prediction.

It failed at data design.

If training data reflects historical oppression, the algorithm becomes a ⚡ high-speed engine for reinforcing it.

🧬 The Proxy Variable Problem

COMPAS does not take race as an input, but several of its inputs can act as proxies for it:

- 📍 Zip Codes → segregated, heavily policed neighborhoods

- 👥 Family/Friend Victim History → systemic inequality exposure

- 🚨 Gang Affiliation → subjective urban profiling

- 🏚️ Socialization History → housing instability, family disruption

These encode structural inequality while appearing neutral.
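A small simulation shows why dropping the protected attribute is not enough. This is a sketch on synthetic data: the groups "A" and "B", the two neighborhoods, and the 80% segregation level are all assumptions made for illustration, not properties of the COMPAS data.

```python
import random

rng = random.Random(0)  # fixed seed so the sketch is reproducible


def make_person():
    """Return (group, neighborhood) for one synthetic person."""
    group = rng.choice(["A", "B"])
    # Assumed segregation: 80% of group A lives in neighborhood 1,
    # 80% of group B lives in neighborhood 2.
    home = 1 if group == "A" else 2
    if rng.random() < 0.2:
        home = 3 - home  # swap 1 <-> 2
    return group, home


people = [make_person() for _ in range(10_000)]

# A model never sees `group`, but it can guess it from the neighborhood.
guess = {1: "A", 2: "B"}
recovered = sum(guess[home] == group for group, home in people) / len(people)

print(f"group recovered from neighborhood alone: {recovered:.0%}")
```

Under these assumptions the "neutral" neighborhood variable recovers group membership for roughly four people in five, so any pattern tied to the group can still reach the model through it.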

⚖️ Due Process vs Trade Secrets

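In State v. Loomis (2016), the Wisconsin Supreme Court allowed sentencing courts to consider COMPAS scores, provided the scores come with written warnings about the tool's limitations.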

🧾 The problem:

Defendants cannot challenge a proprietary algorithm used to sentence them.

Trade secrets > due process.

🔧 Attempts at Reform

equivant’s COMPAS-R Core introduced:

- 🧼 Neutral language revisions

- ❌ Removal of ambiguous responses

- 🧪 Experimental bias-testing questions

These improve optics, not structural fairness.

⚔️ 4. Bias Types: A Clash of Definitions

Fairness is not one thing.

🎯 Three Competing Definitions

1️⃣ Equalized Odds

Equal error rates across groups.

2️⃣ Predictive Parity

Risk scores mean the same across groups.

3️⃣ Historical Bias

Ground truth reflects systemic inequality.
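The first two definitions can be checked directly from predictions and outcomes. The sketch below uses hypothetical toy counts for two groups, "A" and "B", and illustrative helpers `group_rates` and `expand`; it is not an audit of COMPAS. The third definition cannot be checked this way, because historical bias lives in the outcome labels themselves.

```python
def group_rates(y_true, y_pred, groups):
    """Per-group FPR, FNR (equalized odds) and PPV (predictive parity)."""
    out = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, gg in zip(y_true, y_pred, groups) if gg == g]
        tp = sum(1 for t, p in rows if t == 1 and p == 1)
        fp = sum(1 for t, p in rows if t == 0 and p == 1)
        fn = sum(1 for t, p in rows if t == 1 and p == 0)
        tn = sum(1 for t, p in rows if t == 0 and p == 0)
        out[g] = {
            "fpr": fp / (fp + tn),
            "fnr": fn / (fn + tp),
            "ppv": tp / (tp + fp),
        }
    return out


def expand(group, tp, fp, fn, tn):
    """Turn confusion counts into (y_true, y_pred, group) rows."""
    rows = [(1, 1)] * tp + [(0, 1)] * fp + [(1, 0)] * fn + [(0, 0)] * tn
    return [(t, p, group) for t, p in rows]


# Hypothetical toy data: group B has a lower base rate than group A.
rows = expand("A", tp=6, fp=4, fn=4, tn=6) + expand("B", tp=3, fp=2, fn=3, tn=12)
y_true, y_pred, groups = zip(*rows)
rates = group_rates(y_true, y_pred, groups)

for g, r in rates.items():
    print(g, {k: round(v, 2) for k, v in r.items()})
```

In this toy data a High Risk label is right 60% of the time in both groups, so predictive parity holds, yet the false positive rate is 0.40 for group A and about 0.14 for group B, so equalized odds fails.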

⚖️ The Trade-off

- 🧑‍🤝‍🧑 Group fairness → correct systemic disadvantage

- 👤 Individual fairness → treat similar individuals consistently

Improving one often worsens the other.

👉 Justice becomes a statistical choice.

🌍 5. Global South Lens: Exporting Inequality

COMPAS is not just a U.S. story.

Western models are exported as “modernization tools” to the Global South.

But variables like:

- 🏠 Residential stability

- 💼 Vocational history

are not neutral where:

- Informal economies dominate

- Conflict causes displacement

- Colonial policies shaped land access

⚠️ Exporting such models risks technological solutionism — fixing justice with code while ignoring poisoned data.

🧠 6. Bigger Picture: Can We Code Our Way to Justice?

Technical fixes exist. None solve the root problem.

🛠️ Mitigation Strategies

| Strategy | Effectiveness | Limitation |
|---|---|---|
| ⚖️ Reweighing | Improves fairness metrics | Cannot fix biased data |
| 🧮 Prejudice Remover | Enforces independence from race | ~38% accuracy |
| 🔄 Calibrated Equalized Odds | Balances errors | Misses real recidivism |
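To make the first row concrete, here is a minimal sketch of the reweighing idea, using the weighting scheme usually attributed to Kamiran and Calders: each training row gets the weight P(group) × P(label) / P(group, label). The toy groups and labels are hypothetical, and the helper names are illustrative rather than any library's API.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Weight each row by P(group) * P(label) / P(group, label)."""
    n = len(labels)
    g_count = Counter(groups)
    y_count = Counter(labels)
    gy_count = Counter(zip(groups, labels))
    return [
        (g_count[g] * y_count[y]) / (n * gy_count[(g, y)])
        for g, y in zip(groups, labels)
    ]


def weighted_base_rate(group, groups, labels, weights):
    """Weighted share of positive labels within one group."""
    num = sum(w for g, y, w in zip(groups, labels, weights) if g == group and y == 1)
    den = sum(w for g, w in zip(groups, weights) if g == group)
    return num / den


# Hypothetical toy data: group A has 6/10 positive labels, group B has 2/10.
groups = ["A"] * 10 + ["B"] * 10
labels = [1] * 6 + [0] * 4 + [1] * 2 + [0] * 8
weights = reweighing_weights(groups, labels)

for g in ("A", "B"):
    print(g, round(weighted_base_rate(g, groups, labels, weights), 3))
```

After weighting, both groups show the same base rate (0.4 in this toy data), which is why fairness metrics improve. The labels themselves are untouched, so if they were produced by biased policing, that bias is still in the training data.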

🧭 Radical Proposal: Affirmative Algorithms

Use race as a corrective factor to counter systemic bias.

This acknowledges reality — and raises democratic questions.

❓ Curiosity Provocations

- 🎲 If the model is barely better than a coin flip, why use it for prison time?

- 🔒 Should trade secrets override a defendant’s right to examine evidence?

- 🗳️ If fairness definitions conflict, who decides which one governs justice?

🧵 Final Reflection: The Human System Behind the Machine

Algorithms reflect the systems that produce their data:

- 🚔 Over-policing

- 📉 Structural inequality

- 🏛️ Historical discrimination

Technical fixes treat symptoms.

👤 Human accountability must remain the final check.

⚠️ A black box cannot be the final word on human freedom.

📚 References

🔎 Investigative Journalism & Public Analyses

- Angwin, J., Larson, J., Mattu, S., & Kirchner, L. (2016). Machine Bias: There’s Software Used Across the Country to Predict Future Criminals — And It’s Biased Against Blacks. ProPublica.

- Chawla, M. (2022). COMPAS Case Study: Investigating Algorithmic Fairness of Predictive Policing. Medium.

- Deb, E. (2023). COMPAS — an AI Tool Sending or Keeping People in Jail. Medium.

- Murray, K. (2025). What the Legal Drama For the People Teaches Us About AI and Legal Ethics.

- Wikipedia. (2025). COMPAS (software).

⚖️ Legal, Policy & Governance Sources

- State v. Loomis. (2016). 881 N.W.2d 749 (Wis. 2016).

- U.S. Congress. (2025). S.2164 — Algorithmic Accountability Act of 2025. 119th Congress.

- New Jersey Judiciary. (2025). Criminal Justice Reform — Myth v. Fact.

- Tait, E. J., Linas, J. M., Bergstrom, R., Kukkonen, C. A., & Schulman, E. R. (2019). Proposed Algorithmic Accountability Act Targets Bias in Artificial Intelligence. Jones Day.

- Stevenson, M. T., & Doleac, J. L. (2018). Algorithmic Risk Assessment in the Hands of Humans. American Constitution Society.

- Stensrud, A. (2025). The COMPAS Case’s Impact on the EU’s AI Act. Lov&Data.

🧮 Fairness Theory & Technical Research

- Acharya, A., Caravela, D., Kim, E., Kornberg, E., & Nesmith, E. (2022). Does the COMPAS Needle Always Point Towards Equity? Finding Fairness in the COMPAS Risk Assessment Algorithm: A Case Study. CAUSEweb.

- Ejike, U. (2026). The Fairness–Accuracy Frontier: Impossibility Theorems and Optimal Tradeoffs in Algorithmic Decision-Making. WorldQuant University.

- Hellman, D. (2020). Measuring Algorithmic Fairness. Virginia Law Review, 106(4).

- Hsu, B., Mazumder, R., Nandy, P., & Basu, K. (2022). Pushing the Limits of Fairness Impossibility: Who’s the Fairest of Them All? NeurIPS.

- Schmid, F. (2022). Understanding the Importance of Algorithmic Fairness. Gen Re.

- Kartha, N., & Young, W. D. (n.d.). An Overview of Algorithmic Bias in Artificial Intelligence. University of Texas at Austin.

🧠 Ethics, Due Process & Sociotechnical Perspectives

- Humerick, J. (2020). Reprogramming Fairness: Affirmative Action in Algorithmic Criminal Sentencing. Columbia Human Rights Law Review.

- Israni, E. (2017). Algorithmic Due Process: Mistaken Accountability and Attribution in State v. Loomis. JOLT Digest.

- Rev. (n.d.). AI Sentencing Ethics: Balancing Justice and Innovation.

- Vaccaro, M. A. (2019). Algorithms in Human Decision-Making: A Case Study With the COMPAS Risk Assessment Software. Harvard University DASH Repository.

🏢 Vendor & System Documentation

- equivant Supervision. (2024). Debunking Misconceptions About the COMPAS Core Instrument: What You Need to Know.

- equivant Supervision. (2023). How Do the Scales in the COMPAS-R Core Differ From Those in the Standard COMPAS Core?

- equivant Supervision. (2023). Why Was the COMPAS-R Core Created and How Does It Differ From the Standard COMPAS Core?

🧾 Critical Responses & Methodological Debates

- Flores, A. W., Lowenkamp, C. T., & Bechtel, K. (2016). False Positives, False Negatives, and False Analyses: A Rejoinder to “Machine Bias.” Federal Probation Journal, 80(2).

- Fossett, J. (2020). Response to “How We Analyzed the COMPAS Recidivism Algorithm” (Larson et al.).

📥 AI Fairness 101 — Real-World Incidents: The COMPAS Algorithm Scandal Case Deck (PDF)

👉 Download the COMPAS Algorithm Scandal Case Deck (PDF)

🔎 Explore the AI Fairness 101 Series

This post is part of the AI Fairness 101 — Real-World Incidents learning track.

Stay tuned — new posts every week!

💬 Join the Conversation

Have thoughts, experiences, or questions about AI fairness? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page


🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.
