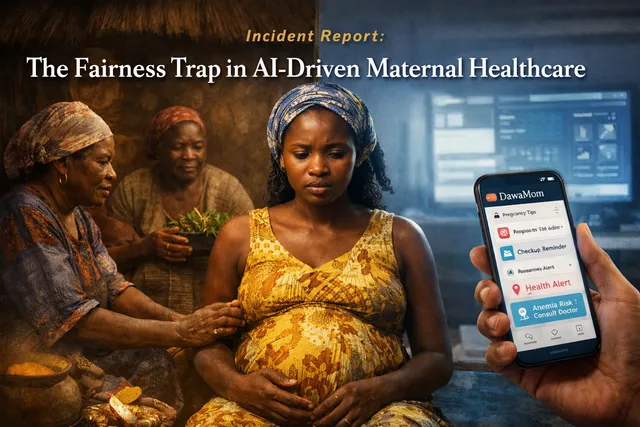

The Fairness Trap in AI-Driven Maternal Healthcare: Zambia's DawaMom Case

How Zambia's DawaMom case reveals the fairness risks of AI-driven maternal healthcare when open-source data, biomedical defaults, and infrastructure gaps fail to reflect local realities.