The Ghost in the Machine: Uganda's Ndaga Muntu and the High Cost of Digital Identity

How Uganda's Ndaga Muntu national ID system exposes the human cost of digital identity when legal status, public services, finance, education, and land rights depend on fragile registry infrastructure.

AI Fairness 101 - Real-World Incidents

Part 12 of 13

Table of Contents

- 🎥 Explained: Uganda’s Ndaga Muntu and the High Cost of Digital Identity

- 🧵 AI Fairness 101 — Real-World Incident #12: Uganda’s Ndaga Muntu

- 🔍 The Ghost in the Machine: Uganda’s Ndaga Muntu and the High Cost of Digital Identity

- 1. What Happened: The Legal vs. Digital Reality

- 2. The Human Impact: Living as a “Ghost”

- 3. Lifecycle Failure: From Data Entry to Printing Press

- 4. Bias Types: Representation and Structural Exclusion

- 5. The Global South Lens: The Infrastructure Gap

- 6. The Bigger Picture: Why the World Should Care

- References

- Related in this cluster

🎥 Explained: Uganda’s Ndaga Muntu and the High Cost of Digital Identity

🧵 AI Fairness 101 — Real-World Incident #12: Uganda’s Ndaga Muntu

A case study in how digital identity can make people visible to databases while leaving them invisible to rights, services, and protection.

🔍 The Ghost in the Machine: Uganda’s Ndaga Muntu and the High Cost of Digital Identity

- 🛠️ System used: Uganda's Ndaga Muntu national identity system and the National Identification Register managed through NIRA

- 👥 Most affected group: poor and elderly citizens, students, informal workers, people with data errors, and communities such as the Maragoli

- ⚠️ Core failure: life-critical services were tied to a registry that is legally mandatory, administratively brittle, and unevenly accessible

- 📉 Outcome: people became "registered but unrecognized," blocked from services, credit, education, and land rights, and dependent on workarounds in order to vote

⚠️ Key Takeaway

Digital identity systems do not only fail when algorithms misclassify people. They also fail when registries, laws, cards, corrections, and service rules combine to make real citizens disappear.

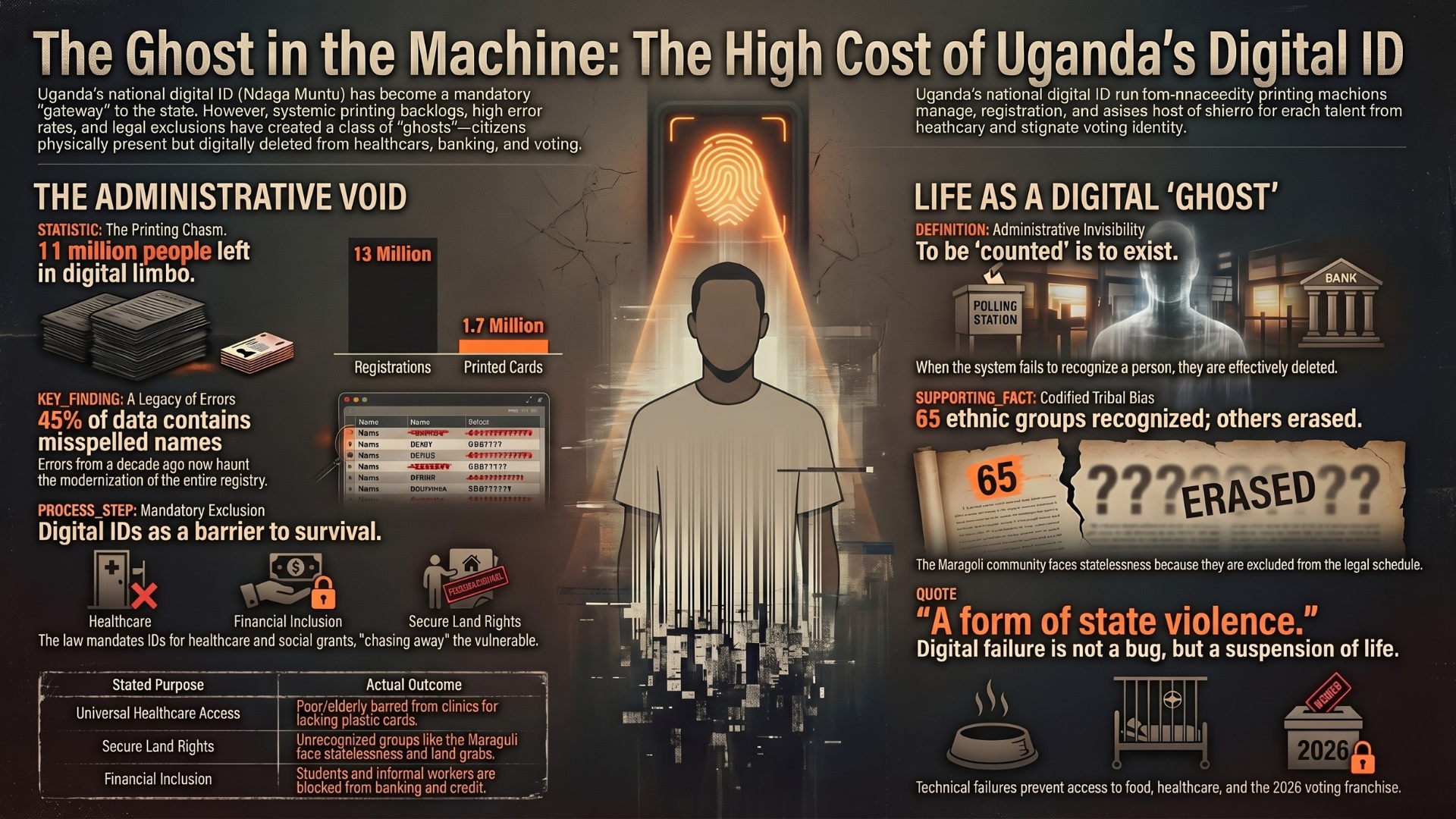

In modern Uganda, citizenship is increasingly mediated by a database. The Ndaga Muntu, Uganda’s national ID system, has been positioned as the gateway to state recognition and access to services. Yet the system has also produced a stark fairness problem: when the registry cannot see someone correctly, that person becomes a kind of administrative ghost.

This case is not only about biometric technology. It is about the legal and infrastructural power of a registry. If access to healthcare, social protection, education, financial services, land claims, and voting processes depends on a fragile identity layer, then identity errors become rights violations.

1. What Happened: The Legal vs. Digital Reality

On June 10, 2025, the Ugandan High Court issued a ruling in HCT-00-CV-MC-0066-2022 that exposed a central paradox in Uganda’s digital identity governance.

The Initiative for Social and Economic Rights (ISER) argued that Ndaga Muntu functions as a biometric backbone that excludes vulnerable people. The Court upheld the status quo. In a narrow interpretation, it asserted that the system is not a “digital ID system” because it functions primarily offline.

That reasoning creates a dangerous legal fiction. Even if citizens use a physical card, the legal and administrative weight sits in the centralized register behind it. Section 65(1) of the Registration of Persons Act (ROPA) makes the National Identification Register a primary data source for accessing essential services such as the Social Assistance Grants for Empowerment (SAGE) and public healthcare.

This means the system can be treated as “offline” in court while still operating as a digital gateway in practice.

The problem is becoming more politically urgent as the 2026 general elections approach. The government’s use of voter location slips as a workaround for people failed by the identity system is a quiet admission that the underlying infrastructure is too fragile to fully trust with democratic participation.

From Kampala courtrooms to registration queues, the practical definition of citizenship is now tethered to a system that can default to exclusion.

2. The Human Impact: Living as a “Ghost”

To understand the fairness problem, we need to look beyond algorithms and into administrative execution. In Uganda, digital exclusion is not just a missing card. It can mean a total suspension of civil life.

When the system fails to count a person, that person becomes a “ghost”: physically present, but deleted from the socio-economic fabric of the nation.

- Asingwire Phemia, a Makerere University student, was blocked from transactions for months because she lacked an ID. Her breakthrough reportedly came not through a formal recourse pathway, but through informal personal contacts.

- Jackson Ampiire, a boda boda rider, faced exclusion from credit and formal economic participation because he lacked a National Identification Number (NIN).

- Asiimwe Derick, a driver, faced livelihood consequences when permit renewal was rejected because of NIRA data errors.

- The Maragoli community, present in Uganda since the 18th century and previously recognized as lawful occupants in government correspondence, now faces a deeper identity crisis because it is not listed in the Constitution’s 3rd Schedule.

For the Maragoli, the ID system is not just inconvenient. It can deepen statelessness. Without recognition, people struggle to obtain IDs. Without IDs, they struggle to prove land ownership or seek legal redress. That makes them more vulnerable to eviction and land pressure from commercial agribusiness.

| Stated purpose of Ndaga Muntu | Actual exclusionary outcome |
|---|---|
| Universal access to health services and social grants such as SAGE | Poor and elderly citizens can be turned away from clinics or grants for lacking the required card or data match |
| Legal security through reliable identification | Communities such as the Maragoli can be rendered stateless and vulnerable to land dispossession |
| Modernization of financial inclusion and school enrollment | Students and informal workers can be blocked from basic tools of social mobility |

These harms begin long before a card reaches a citizen’s hand. They begin in registration, data entry, printing capacity, correction processes, and legal design.

3. Lifecycle Failure: From Data Entry to Printing Press

The Ndaga Muntu crisis is a lifecycle failure. Administrative neglect at one stage cascades into exclusion at the next.

| Lifecycle phase | What went wrong | Fairness consequence |
|---|---|---|
| Enrollment | Uganda targeted about 18 million people for enrollment and renewal, with about 13 million reportedly registered | Registration progress did not translate into usable identity for everyone |
| Card production | Only about 1.7 million physical cards were reportedly printed from that large registration pool | More than 11 million people risk being stuck in “registered but unrecognized” limbo |
| Data quality | Errors from earlier registration drives, especially between 2014 and 2017, continue to haunt the registry | Misspelled names and incorrect dates of birth become long-term barriers to service access |
| Renewal and correction | Over 10,000 IDs were reportedly rejected due to false or mismatched data | Citizens are pushed into correction cycles that can be slow, costly, and livelihood-threatening |
| Fraud control | In 2022, about 6,000 false identities were reportedly found in Kiryandongo, Rhino, and Bidi Bidi settlements | Real citizens face hurdles while insiders can exploit registry weaknesses for benefit fraud |

The most important lesson is that identity systems do not need a sophisticated AI model to produce algorithmic harm. A brittle registry can generate the same outcome: automated exclusion at scale.
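The scale of that exclusion follows directly from the reported lifecycle figures. The short sketch below only redoes the arithmetic; the constants are the approximate numbers quoted in this section, and the function name and dictionary keys are illustrative, not part of any official reporting.

```python
# Approximate figures as reported in the lifecycle table above.
TARGET = 18_000_000        # people targeted for enrollment and renewal
REGISTERED = 13_000_000    # people reportedly registered
CARDS_PRINTED = 1_700_000  # physical cards reportedly printed


def lifecycle_gaps(target: int, registered: int, printed: int) -> dict:
    """Return the drop-off between each lifecycle stage."""
    return {
        "not_yet_registered": target - registered,
        "registered_without_card": registered - printed,
        "registration_rate": registered / target,
        "card_rate_among_registered": printed / registered,
    }


gaps = lifecycle_gaps(TARGET, REGISTERED, CARDS_PRINTED)
print(f"Registered without a card: {gaps['registered_without_card']:,}")           # 11,300,000
print(f"Share of target registered: {gaps['registration_rate']:.0%}")              # 72%
print(f"Share of registered with a card: {gaps['card_rate_among_registered']:.0%}")  # 13%
```

On these figures, roughly seven in eight registered people would be without a printed card, which is where the "more than 11 million" estimate comes from.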

When a misspelled name, wrong date of birth, or missing tribe classification becomes the difference between recognition and erasure, the system is not merely inefficient. It is structurally unfair.
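To make the mechanism concrete, here is a minimal sketch of how a strict equality check turns a one-letter spelling difference into a denial, next to a more tolerant rule that sends near matches to a human reviewer. It is not NIRA's actual matching logic, which is not described in the sources. The names, the `REVIEW_THRESHOLD` value, and the three decision labels are all assumptions made for illustration.

```python
from difflib import SequenceMatcher

REVIEW_THRESHOLD = 0.85  # hypothetical cut-off, chosen only for illustration


def similarity(a: str, b: str) -> float:
    """Case-insensitive similarity ratio between two strings (0.0 to 1.0)."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def strict_match(registry_name: str, presented_name: str) -> bool:
    """Brittle rule: any character difference counts as a failed match."""
    return registry_name == presented_name


def tolerant_decision(registry_name: str, presented_name: str) -> str:
    """Exact matches pass; near matches go to a person, not to rejection."""
    if registry_name.strip().lower() == presented_name.strip().lower():
        return "accept"
    if similarity(registry_name, presented_name) >= REVIEW_THRESHOLD:
        return "manual_review"
    return "reject"


# Fictional example: the registry entry dropped the final "h" at data entry.
registry_entry = "Namukasa Sara"
presented = "Namukasa Sarah"

print(strict_match(registry_entry, presented))       # False
print(tolerant_decision(registry_entry, presented))  # manual_review
```

The design point is the middle outcome: a registry that only knows "match" and "no match" has nowhere to put an honest data-entry error except rejection.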

4. Bias Types: Representation and Structural Exclusion

In Uganda’s national ID system, bias is not only found in software. It is also embedded in law, categories, and administrative workflows.

Representation Bias

The Constitution’s 3rd Schedule recognizes 65 ethnic groups. The exclusion of the Maragoli means that the identity system can automate their marginalization. People may be told to register under another tribe to obtain documents, but that “solution” forces the erasure of cultural identity.

This is representation bias in its deepest form: the system cannot fairly represent a group because the legal categories behind the system do not recognize them.
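A closed category list behaves the same way in any form or database, which is why the bias can be reproduced in a few lines. The sketch below is a simplified model, not the actual registration software: `RECOGNIZED_GROUPS` is a tiny illustrative subset of the schedule, and `Outcome` and `apply_closed_list` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional

# Tiny illustrative subset; the actual schedule lists 65 groups.
RECOGNIZED_GROUPS = frozenset({"Acholi", "Baganda", "Banyankore", "Iteso"})


@dataclass
class Outcome:
    registered: bool
    recorded_group: Optional[str]
    identity_preserved: bool


def apply_closed_list(declared_group: str,
                      fallback_group: Optional[str] = None) -> Outcome:
    """Model what a closed category list allows for a given applicant."""
    if declared_group in RECOGNIZED_GROUPS:
        return Outcome(True, declared_group, True)
    if fallback_group in RECOGNIZED_GROUPS:
        # The applicant gets documents only by recording another group.
        return Outcome(True, fallback_group, False)
    # No listed category fits, so no record can be created.
    return Outcome(False, None, True)


print(apply_closed_list("Maragoli"))
print(apply_closed_list("Maragoli", fallback_group="Baganda"))
```

Under a closed list, an unlisted applicant has only two outcomes: no registration, or registration under an identity that is not theirs. No amount of careful data entry changes that, because the constraint sits in the category set itself.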

Economic and Class Bias

Making a NIN mandatory for SAGE grants, public health, finance, education, or permits shifts the burden onto the people least able to navigate delays. Poor citizens, elderly people, informal workers, and rural residents face the highest cost when documents are missing or wrong.

A single name mismatch can become an economic sentence.

Ethnic Favoritism and Informal Infrastructure

Phemia’s story shows how broken formal systems can push people into informal infrastructure. If access depends on personal contacts, tribe mates, or someone who knows how to bypass the queue, then the right to identity becomes unevenly distributed.

That kind of workaround is not resilience. It is evidence of institutional failure.

Corruption Bias

When the formal pathway is slow or unreliable, predatory intermediaries can become the interface between citizens and the state. The story of “Godfrey,” who was reportedly approached by a person with insider knowledge of NIRA systems to buy back a lost ID, illustrates the corruption risk created by bureaucratic scarcity.

In this environment, the system does not only exclude. It can also convert identity into a black-market commodity.

5. The Global South Lens: The Infrastructure Gap

Uganda’s experience highlights a wider problem in Digital Public Infrastructure: the tension between mandatory integration and universal access.

The High Court’s “offline” reasoning may describe how a person physically presents an ID card. It does not describe how power actually flows through the system. The backbone remains a centralized registry that determines whether a person is legible to the state.

In lower-connectivity and high-corruption settings, mandatory digital identity can create a vacuum. When the official system fails, citizens search for whoever can make the system work. That vacuum is filled by informal payments, personal connections, tribal networks, and insider brokers.

This is why the Uganda case matters beyond Uganda. It shows that digital public infrastructure is not automatically inclusive. If the foundational layer is legally mandatory but operationally unreliable, it can make exclusion more systematic, not less.

6. The Bigger Picture: Why the World Should Care

Why should a professional in London, New York, or Nairobi care about a court ruling in Kampala?

Because digital invisibility can serve global economic interests. If the Maragoli cannot obtain IDs, cannot prove land ownership, and cannot easily access courts, then their vulnerability can make land acquisition easier for powerful commercial actors. The sources cited below raise concerns involving multinational agribusinesses such as Agilis Partners and Great Season, where documentation gaps can make ancestral land claims harder to defend.

The broader lessons are clear:

- Mandatory integration is a human rights risk. When digital ID becomes the only route to food, health, finance, or education, technical failure becomes a form of state violence.

- Digital public infrastructure (DPI) can become a tool for disenfranchisement. Without safeguards, centralized databases can become political levers during sensitive periods such as the 2026 elections.

- Digital rights are human rights. Legal frameworks such as ROPA must be tested against constitutional protections such as Article 21.

- Identity systems must support correction and appeal. A database error should never become a permanent civil status.

- Recognition must come before integration. Communities that are not legally recognized cannot be fairly integrated into digital identity systems.
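The first and fourth lessons above can be read as a design rule for any service that checks the registry: a failed lookup should open a correction path rather than end in denial. The sketch below contrasts a hard gate with a safeguarded one. It is an illustration under stated assumptions, not a description of how any Ugandan service works; the status values, decision labels, and the `has_alternative_proof` parameter are all hypothetical.

```python
from enum import Enum


class RegistryStatus(Enum):
    VERIFIED = "verified"
    DATA_MISMATCH = "data_mismatch"
    CARD_PENDING = "card_pending"
    NOT_FOUND = "not_found"


class Decision(Enum):
    SERVE = "serve"
    SERVE_PROVISIONALLY = "serve_and_open_correction_case"
    REFER = "refer_to_registration_support"
    DENY = "deny"


def hard_gate(status: RegistryStatus) -> Decision:
    """Mandatory integration: anything short of a verified record is denied."""
    return Decision.SERVE if status is RegistryStatus.VERIFIED else Decision.DENY


def safeguarded_gate(status: RegistryStatus,
                     has_alternative_proof: bool = False) -> Decision:
    """Registry problems trigger correction or referral, never a final denial."""
    if status is RegistryStatus.VERIFIED:
        return Decision.SERVE
    if status in (RegistryStatus.DATA_MISMATCH, RegistryStatus.CARD_PENDING):
        # The person is in the registry; the fault lies with the record or card.
        return Decision.SERVE_PROVISIONALLY
    if has_alternative_proof:
        return Decision.SERVE_PROVISIONALLY
    return Decision.REFER


print(hard_gate(RegistryStatus.DATA_MISMATCH).value)         # deny
print(safeguarded_gate(RegistryStatus.DATA_MISMATCH).value)  # serve_and_open_correction_case
```

The safeguarded gate never returns `DENY` for a registry fault, which is one way to encode the principle that a database error should not become a permanent civil status.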

As the world rushes to digitize identity, Uganda’s Ndaga Muntu raises a hard question: are we building systems to empower citizens, or are we perfecting the machinery required to make inconvenient people disappear?

References

- Initiative for Social and Economic Rights (ISER). (June 20, 2025). “The High Court Fails to Recognize the Urgent Need for Legal Reform Toward an Inclusive National Digital ID System.” Press Statement.

- Muvunyi, Talent Atwine and Jjumba, Muhammad. (January 8, 2026). “Without an ID, you are a ghost.” The Observer.

- Landinfo. (February 12, 2025). “Uganda: Asylum seekers and refugees: Registration, documentation and other aspects.” Report.

- ArcGIS StoryMaps. (March 11, 2022). “The Maragoli of Uganda: Pushing Back Against Human Rights Violations.”

- National Identification and Registration Authority (NIRA). “Press release on mass enrolment and renewal exercise.”

- ARATEK. “Uganda National ID Explained: From Registration to Renewal.” Smart ID technical overview.

- Constitution of the Republic of Uganda (1995), Article 21 and the 3rd Schedule.

- Registration of Persons Act (ROPA), Section 65(1).

Related in this cluster

- Kenya’s Digital ID Crossroads: How Huduma Namba and Maisha Namba Risk Exclusion by Design

- Access Denied: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

- When an Algorithm Broke Thousands of Families: The Netherlands Child Welfare Scandal

- The Golden Touch of Ruin: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud

- The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail
