The Fairness Trap in AI-Driven Maternal Healthcare: Zambia's DawaMom Case

How Zambia's DawaMom case reveals the fairness risks of AI-driven maternal healthcare when open-source data, biomedical defaults, and infrastructure gaps fail to reflect local realities.

AI Fairness 101 - Real-World Incidents

Part 13 of 13

Table of Contents

- 🎥 Explained: The Fairness Trap in AI-Driven Maternal Healthcare

- 🧵 AI Fairness 101 — Real-World Incident #13: Zambia’s DawaMom Maternal Health Case

- 🔍 The Fairness Trap in AI-Driven Maternal Healthcare

- 1. What Happened: The DawaMom Case

- 2. The Human Impact: Beyond Clinical Metrics

- 3. Lifecycle Failure: The Engineering of Inequality

- 4. Bias Types: A Diagnostic Taxonomy

- 5. The Global South Lens: Infrastructure and Epistemic Justice

- 6. The Bigger Picture: From Silicon Valley Dreams to Global Health Justice

- 7. The 48-Hour Challenge

- References

- Related in this cluster

🎥 Explained: The Fairness Trap in AI-Driven Maternal Healthcare

🧵 AI Fairness 101 — Real-World Incident #13: Zambia’s DawaMom Maternal Health Case

A case study in how AI for maternal healthcare can reproduce exclusion when clinical accuracy is optimized before local knowledge, infrastructure, and trust are treated as design requirements.

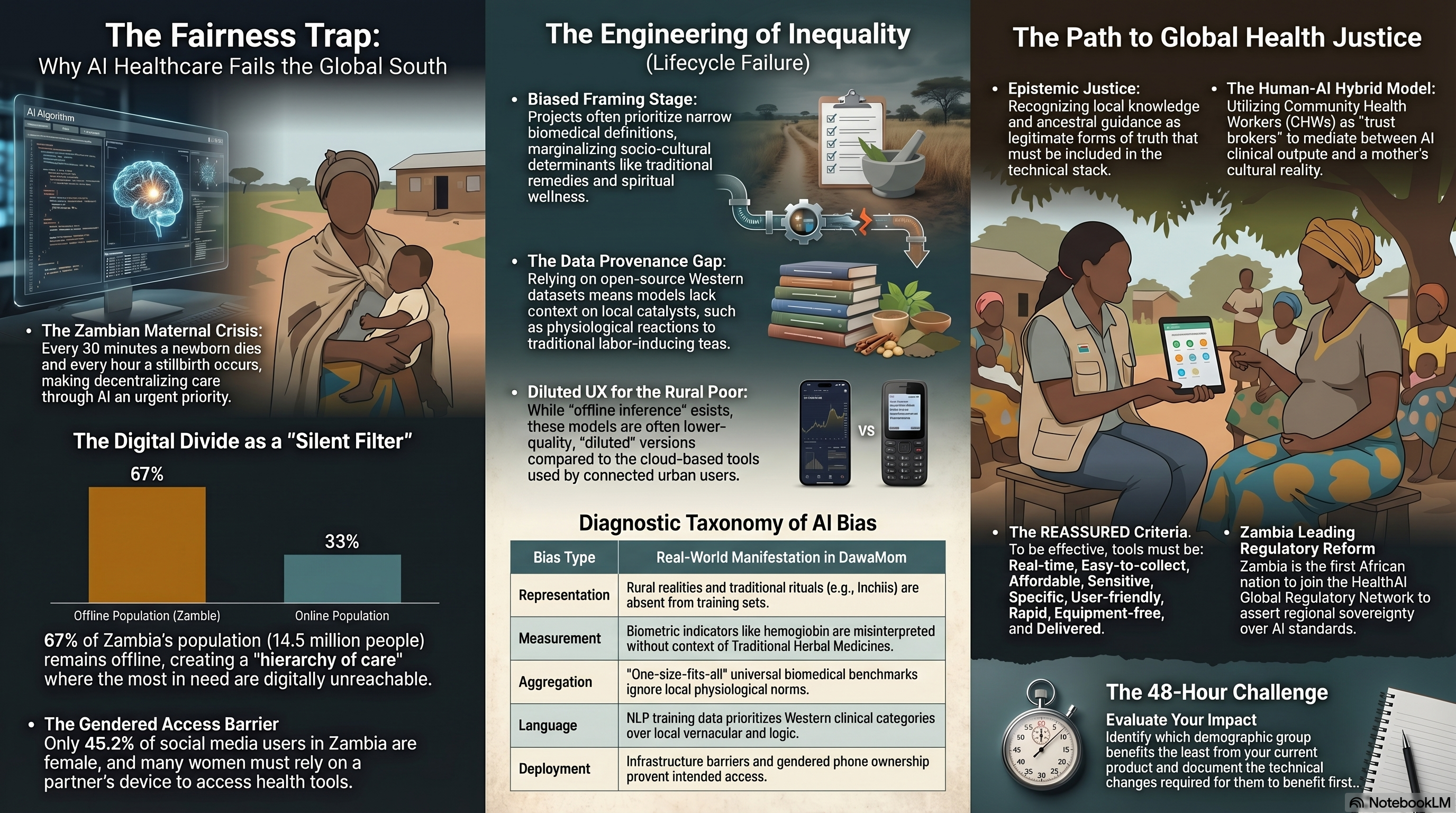

🔍 The Fairness Trap in AI-Driven Maternal Healthcare

- 🛠️ System used: DawaMom, a maternal health platform from Dawa Health using patient, Community Health Worker, and clinician-facing layers

- 👥 Most affected group: rural, low-income, low-literacy, and offline pregnant women whose care practices may combine clinical medicine, traditional remedies, and family guidance

- ⚠️ Core failure: AI models and health workflows risked centering digitized biomedical data while missing indigenous maternal knowledge, infrastructure limits, and gendered access to devices

- 📉 Outcome: the same tools designed to expand maternal care could create a hierarchy of care in which connected urban users receive richer support than mothers facing the highest risk

⚠️ Key Takeaway

An AI health system is not fair just because its model performs well in a test set. If its data pipeline does not reflect local realities, its accuracy can become a polished surface over a deeper exclusion problem.

Sub-Saharan Africa has become a major proving ground for the “AI for Good” movement, especially in digital health. The need is real. In Zambia, maternal and newborn health risks remain urgent, and AI-assisted tools promise to extend scarce clinical expertise into communities that traditional health systems struggle to reach.

But the DawaMom case shows why technical ambition is not enough. In maternal healthcare, fairness is not only a question of whether a model detects anemia, flags cervical cancer risk, or supports an ultrasound workflow. It is also a question of whose knowledge counts, whose body is treated as normal, and whose infrastructure is assumed by default.

1. What Happened: The DawaMom Case

DawaMom was developed as a layered maternal health platform intended to support pregnant women, Community Health Workers, and clinical practitioners.

- The patient-facing interface includes GogoGemma AI, built with services such as AWS Lex, Lambda, and Kendra, and integrated with Gemini-powered Large Language Models through Retrieval-Augmented Generation.

- The platform supports frontline maternal care through a Community Health Worker portal and a clinical dashboard for practitioners.

- Diagnostic support includes computer vision for anemia screening through lower-eyelid hemoglobin estimation, Vision Transformer-based cervical cancer screening, and AI-assisted “blind sweep” ultrasound workflows.

- The system supports seven languages: Shona, Ndebele, Nyanja, Tonga, Bemba, Lozi, and English.

On paper, this looks like a powerful example of digital health innovation. The technical stack combines conversational AI, retrieval systems, computer vision, and clinical workflow support. Yet the Mozilla Foundation’s Incomplete Chronicles research identified a deeper fairness problem: a provenance gap.

When digitized, context-rich local health data is scarce, developers may rely on standardized open-source repositories such as Kaggle. That can help teams prototype quickly, but it also creates a dangerous mismatch. Data collected under Western biomedical assumptions may not reflect Zambian maternal realities, traditional herbal medicine use, family decision-making, or local understandings of wellness.

As the Mozilla Foundation research argues:

“For AI to truly improve healthcare in underserved communities, it must reflect local realities.”

That sentence is the hinge of the case. Diagnostic accuracy is not a sufficient fairness metric if the data pipeline itself is stripped of local context.

2. The Human Impact: Beyond Clinical Metrics

AI health tools often report success through technical performance: accuracy, sensitivity, specificity, response time, or model benchmark scores. Those metrics matter. But they do not tell the whole story.

For DawaMom, real-world impact is shaped by what the incident report calls a silent filter: infrastructure, culture, trust, and device access.

- Exclusion by design: In Zambia, a large share of the population remains offline. For mothers without reliable connectivity, AI-enabled care can become physically and digitally unreachable.

- Trust erosion: When AI recommendations ignore ancestral guidance, traditional remedies, or “organic wellness” practices, users may begin to discount the entire system.

- Uneven benefit distribution: Urban, connected users are more likely to access richer multimodal features, while rural mothers may receive a diluted text-only experience.

- Gendered access constraints: Women may not always own or control the phone used to access maternal health tools, making privacy and continuity of care fragile.

The fairness harm is not that digital health exists. The harm is that the richest version of the system may be available to the people already closest to formal care, while the lowest-bandwidth version reaches those facing the greatest maternal health risk.

That creates a hierarchy of care: cloud-enabled diagnostics for some, thin digital advice for others.

3. Lifecycle Failure: The Engineering of Inequality

The DawaMom case is best understood as a lifecycle failure. The fairness risks emerge across framing, data, modeling, user experience, and deployment.

| Lifecycle phase | What went wrong | Fairness consequence |

|---|---|---|

| Problem framing | Maternal knowledge was framed primarily through biomedical variables such as blood pressure, hemoglobin, and clinical risk markers | Traditional remedies, spiritual wellness, and family-led delivery preparation were treated as peripheral rather than core context |

| Data pipeline | Local data scarcity pushed the system toward standardized open-source sources and externally legible datasets | The model risked learning a version of maternal health that did not match the lived reality of Zambian users |

| Measurement design | Biometric indicators stood in for holistic wellbeing | Physiological reactions to traditional herbal medicines could be interpreted as generic clinical disorders |

| Modeling and representation | Standardization was treated as a path to scale | Complex care practices such as Inchila rituals or Mono root use became difficult to encode and easy to omit |

| UX and infrastructure | The system’s best performance depended on connectivity, smartphones, and cloud access | Rural users could receive a lower standard of care through constrained offline or text-only workflows |

This is why fairness failures are rarely accidental. They are often the result of reasonable-seeming engineering choices that become harmful because they are made under unequal conditions.

If a team standardizes to scale, it also decides what gets averaged out.

4. Bias Types: A Diagnostic Taxonomy

The DawaMom case maps clearly onto the bias framework developed by Harini Suresh and John Guttag. The value of this taxonomy is that it lets us locate the harm rather than treating “bias” as a vague label.

| Bias type | Real-world manifestation in DawaMom |

|---|---|

| Representation bias | Rural realities, traditional rituals, and locally used remedies such as Mono root or Inchila are absent or underrepresented in training and evaluation data |

| Measurement bias | Hemoglobin and other clinical markers are used as proxies for maternal wellbeing, while local care practices and herbal medicine effects remain invisible |

| Aggregation bias | Universal biomedical benchmarks are applied across diverse Zambian populations as if one physiological norm fits all users |

| Language and interaction bias | Western clinical categories can dominate NLP behavior even when the interface supports local languages |

| Deployment bias | Connectivity gaps, gendered phone access, and uneven Community Health Worker coverage shape who can actually benefit from the tool |

The key insight is simple: bias is the shadow cast by a scaling strategy.

The moment a system is standardized for broad deployment, it decides which populations are legible enough to model and which realities are too messy to include.

5. The Global South Lens: Infrastructure and Epistemic Justice

In the Global South, fairness cannot be separated from infrastructure. A system designed around an imagined “default user” who is urban, connected, literate, and clinically oriented will not serve the full population equally.

The deeper issue is epistemic justice: the recognition of local knowledge as a legitimate form of truth.

In Zambia, many pregnant women use Traditional Herbal Medicines and rely on maternal family networks, including grandmothers and aunts, for delivery preparation. If an AI health system ignores these practices, it is not merely incomplete. It may become unsafe, because it can misread local behavior as noncompliance, misinformation, or abnormal physiology.

This is what “colonial residue” means in AI health systems. It is not only about whether a chatbot can translate words into Bemba, Tonga, or Nyanja. It is about whether the system’s underlying logic treats Western biomedical categories as the only legitimate way to understand pregnancy, risk, and care.

Language support without epistemic support is not enough.

6. The Bigger Picture: From Silicon Valley Dreams to Global Health Justice

The path forward is not to abandon AI in maternal healthcare. The need for better support is too urgent, and the promise of responsible digital health is real. The lesson is that fairness must be managed as a continuous lifecycle practice, not a launch slogan.

Standards such as the NIST AI Risk Management Framework and UNESCO’s Recommendation on the Ethics of Artificial Intelligence push in this direction by treating fairness, accountability, and human oversight as ongoing responsibilities.

For maternal health AI in Zambia, a more just approach would include:

- Build local data governance processes that include mothers, midwives, Community Health Workers, and traditional knowledge holders.

- Treat traditional maternal practices as context to be understood, not noise to be removed.

- Evaluate tools across rural, low-bandwidth, low-literacy, and shared-device conditions before claiming broad impact.

- Use Community Health Workers as trust brokers who can mediate between clinical outputs and cultural realities.

- Design offline and low-connectivity workflows as first-class experiences rather than reduced versions of the product.

- Create escalation, appeal, and correction pathways when AI advice conflicts with local knowledge or lived experience.

Zambia’s participation in the HealthAI Global Regulatory Network also matters. It signals a chance for countries in the Global South to shape AI health governance from within their own operating realities rather than simply importing standards from elsewhere.

The strategic question is not whether AI can improve maternal health. It can. The harder question is whether the system has been designed so that the mothers facing the most risk benefit first.

7. The 48-Hour Challenge

Evaluate a product you currently work on, fund, or oversee. Identify the demographic group that benefits the least from its current design.

Within the next 48 hours, document in writing exactly what structural or technical changes would be required for that group to benefit first.

Then ask the uncomfortable follow-up: if that change is technically possible, why has it not already been prioritized?

References

- Mozilla Foundation. (2024). Addressing AI Bias in Maternal Healthcare in Southern Africa. https://www.mozillafoundation.org/en/blog/addressing-ai-bias-in-maternal-healthcare-in-southern-africa/

- Mozilla Foundation. (2024). Incomplete Chronicles: Unveiling Data Bias in Maternal Health. https://www.mozillafoundation.org/en/research/library/incomplete-chronicles-unveiling-data-bias-in-maternal-health/

- Mail & Guardian. (2025). Cultural barriers may limit AI’s success in maternal healthcare in Africa. https://mg.co.za/health/2025-01-07-cultural-barriers-may-limit-ais-success-in-maternal-healthcare-in-africa/

- HealthAI Agency. (2025). Zambia Becomes First African Country to Join HealthAI Global Regulatory Network. https://healthai.agency/zambia-first-african-country-joins-healthai-grn/

- Dawa Health. (2026). Decentralized Healthcare Platform Overview. https://dawa-health.com/

- DS4Health Africa. (2025). Zambia’s Dawa Health Wins Google-Backed Ideathon. https://thenextafrica.com/zambias-dawa-health-wins-google-backed-ideathon-with-ai-breakthrough-for-womens-healthcare/

- National Institute of Standards and Technology. (2023). AI Risk Management Framework (AI RMF 1.0). https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

- UNESCO. (2021). Recommendation on the Ethics of Artificial Intelligence. https://www.ohchr.org/sites/default/files/2022-03/UNESCO.pdf

- Suresh, H., & Guttag, J. V. (2019). A Framework for Understanding Unintended Consequences of Machine Learning. https://arxiv.org/abs/1901.10002

- World Journal of Advanced Research and Reviews. (2025). Harnessing AI to improve maternal outcomes in Sub-Saharan Africa. https://doi.org/10.30574/wjarr.2025.27.1.2642

📥 AI Fairness 101 — Real-World Incidents

Related in this cluster

- The Ghost in the Machine: Uganda’s Ndaga Muntu and the High Cost of Digital Identity

- Kenya’s Digital ID Crossroads: How Huduma Namba and Maisha Namba Risk Exclusion by Design

- The Optum Proxy Bias against Black Patients

- United Health nh-predict algorithm bias in healthcare against Elderly patients

- Browse all AI Fairness posts

🔎 Explore the AI Fairness 101 Series

This post is part of the AI Fairness 101 - Real-World Incidents learning track.

Stay tuned - new posts every week.

💬 Join the Conversation

Have thoughts, experiences, or questions about AI fairness? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page

📧 Write to us: [email protected]

🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.

Related Posts

How AI Bias Locked Out Millions of Job Seekers (A Case Study on Mobley v. Workday)

The Mobley v. Workday lawsuit represents a landmark shift in legal accountability, establishing that AI software vendors can be held liable as agents for discriminatory hiring practices that exclude qualified candidates. The case highlights how black box algorithms can systematically penalize individuals based on race, age, and disability through biased training data and the use of neutral proxies. This legal evolution signals a broader mandate for Accountability by Design, requiring employers and developers to ensure transparency and human oversight in automated recruiting systems

The COMPAS Algorithm Scandal: When AI Decides Who Goes to Jail ⚖️

As AI enters courts and welfare systems worldwide, the COMPAS debate reveals a critical lesson: fairness depends on context, and exporting models without reform risks scaling inequality.

When an Algorithm Broke Thousands of Families: The Netherlands Child Welfare Scandal

How a design-phase failure in the Dutch childcare fraud algorithm created one of the worst AI governance disasters in Europe — and what the Global South must learn from it.