Access Denied: How India's Digital 'Cure-All' Became a Real-World Fairness Crisis

How Aadhaar’s promise of digital inclusion turned into one of the largest algorithmic exclusion crises in the world.

AI Fairness 101 - Real-World Incidents

Part 2 of 4

Table of Contents

- 🧵 AI Fairness 101 — Real-World Incident #2: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

- 🎥 Explained: Aadhaar, Exclusion, and Algorithmic Harm

- 🔍 Access Denied: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

- 🧠 1. What Happened: From Vision to Reality

- 👨👩👧👦 2. The Human Impact: When the System Says “No”

- 🧭 3. Lifecycle Failure: Where the System Broke Down

- 🎭 4. Bias Types: A Deeper Look at Algorithmic Injustice

- 🌍 5. The Global South Lens: A Model or a Warning?

- 📌 6. The Bigger Picture: Technology vs Human Dignity

- 📚 References

- 📥 AI Fairness 101 — Real-World Incidents: Aadhaar Exclusions Case Deck (PDF)

- 🔎 Explore the AI Fairness 101 Series

- 💬 Join the Conversation

- 🌍 Follow GlobalSouth.AI


🧵 AI Fairness 101 — Real-World Incident #2: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

🎥 Explained: Aadhaar, Exclusion, and Algorithmic Harm

🔍 Access Denied: How India’s Digital ‘Cure-All’ Became a Real-World Fairness Crisis

When India launched the Aadhaar project, it was heralded as a monumental leap into a digital future. The vision was breathtakingly ambitious: to equip over a billion people with a unique digital identity, streamlining welfare, eliminating rampant fraud, and creating a modern digital backbone for the world’s largest democracy.

This wasn’t just a domestic policy; it was positioned as a global model for digital governance — a high-tech solution that could lift millions out of poverty by ensuring benefits reached those who needed them most.

At its core, Aadhaar is the world’s largest biometric identification system. It collects fingerprints, iris scans, and facial photographs, linking them to a unique 12-digit number issued to every resident. This number was designed to be a single, irrefutable proof of identity, intended to simplify everything from opening a bank account to receiving government subsidies.

However, the chasm between the system’s stated goals and its real-world implementation quickly became a source of national tension. What began as a tool for inclusion rapidly transformed into a gatekeeper for essential rights.

🧠 1. What Happened: From Vision to Reality

| Stated Goal | Mandatory Reality |
|---|---|
| To prevent fraud and “leakages” in welfare programs, ensuring benefits reached genuine recipients. The government claimed savings of ₹2.7 lakh crore and elimination of over 5 crore fake ration cards. | The Aadhaar Act of 2016 (Section 7) and a 2017 government notification made Aadhaar mandatory for accessing hundreds of essential public services, including the Public Distribution System (PDS) for subsidized food rations. |

The irony was profound. A system designed to prevent the “leakage” of small-scale welfare benefits inadvertently created new attack surfaces for large-scale criminal fraud.

- In Uttar Pradesh, Aadhaar was used to defraud people of their PDS rations.

- In Madhya Pradesh, it enabled cheating in police recruitment exams.

- Most brazenly, a fraudster named Imran Ali created an entirely fake biometric identity using toe prints, then used it to scam victims out of ₹26 crore.

This digital “cure-all” was showing symptoms of a serious illness.

A system designed to see everyone was rendering the most vulnerable completely invisible.

👨👩👧👦 2. The Human Impact: When the System Says “No”

To understand Aadhaar, you must leave policy documents and enter villages, slums, and remote communities. The true test of technology is not scale, but how it treats the weakest.

The failures became human tragedies:

- Jasoda Munda (47) lost rations for months because her biometrics “did not work.”

- Bonjh Hembrom (88) had fingerprints worn smooth by manual labor. No scan, no food.

- Sukra Oram (83) was denied rations and pension for 16 months before dying.

- Santoshi Kumari (11) died after her family’s ration card was delinked.

- Arjun Hembram (11) starved after a name mismatch cut off food for two years.

These were not glitches. They were death sentences by database error.

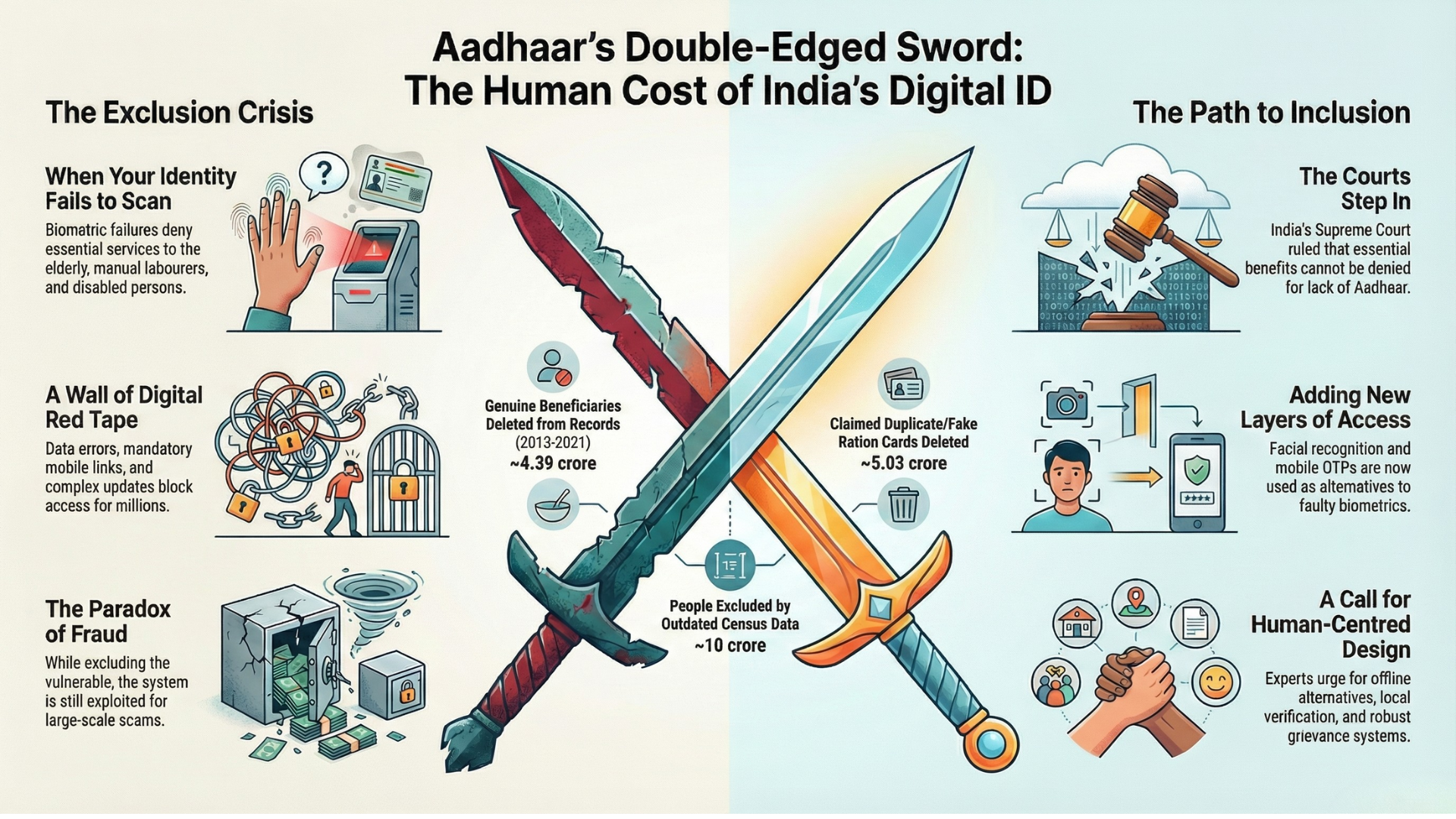

The Scale of Exclusion

These were not isolated incidents. The numbers reveal systemic failure:

- 10 crore (100 million) people excluded from food security because of Census delays.

- 4.39 crore (43.9 million) people deleted from PDS between 2013 and 2021.

- 28% of mothers applying for maternity benefits had payments misdirected.

For millions, a system meant to deliver life-saving aid became an impenetrable barrier.

🧭 3. Lifecycle Failure: Where the System Broke Down

Failures in large systems are rarely single bugs. They emerge across the entire lifecycle.

Aadhaar failed at multiple stages.

1. Flawed by Design (Problem Formulation)

Aadhaar was framed around fraud prevention, not universal inclusion.

Any reduction in beneficiaries was labeled as “savings.” This created a perverse logic:

Denying a genuine poor person became more acceptable than allowing a fake one.

Efficiency was prioritized over the fundamental right to food.
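
The accounting logic described above can be made concrete with a small sketch. Everything in it is an assumption made for illustration: the `Deletion` record, its field names, and the figures are hypothetical and are not drawn from any government dataset. It only shows how a single "savings" total can hide the share that comes from denying entitled people.

```python
from dataclasses import dataclass


@dataclass
class Deletion:
    """One beneficiary record removed from the rolls (hypothetical schema)."""
    monthly_benefit_rupees: int
    was_genuine: bool  # True if the person was actually entitled to the benefit


def summarize(deletions: list[Deletion]) -> dict[str, int]:
    """Split 'savings' as reported under a fraud-only framing into the part
    that removed fraud and the part that denied entitled people."""
    reported = sum(d.monthly_benefit_rupees for d in deletions)
    wrongly_denied = sum(
        d.monthly_benefit_rupees for d in deletions if d.was_genuine
    )
    return {
        "reported_savings": reported,
        "true_fraud_savings": reported - wrongly_denied,
        "benefits_wrongly_denied": wrongly_denied,
        "genuine_people_excluded": sum(d.was_genuine for d in deletions),
    }


# Hypothetical example: 10 deletions, 3 of them genuine beneficiaries.
deletions = [Deletion(500, False)] * 7 + [Deletion(500, True)] * 3
summary = summarize(deletions)
print(summary)
```

Under a fraud-only framing, only `reported_savings` would be tracked. A rights-based framing would treat `genuine_people_excluded` as the figure to minimize first.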

2. Deficient from the Start (Data & Enrollment)

A 2022 audit by India’s Comptroller and Auditor General found:

- Aadhaar numbers were issued even with poor-quality biometrics.

- UIDAI took no responsibility for faulty data capture.

- Citizens bore the burden and cost of fixing system errors.

The system’s mistakes became the citizen’s problem.

3. Broken in Practice (Deployment & Implementation)

Supreme Court-mandated “exception handling” existed mostly on paper. In tribal districts, most mobile Aadhaar enrollment units were non-functional. The people who needed the system most could not access it at all.

On the ground:

- Biometric machines failed.

- Internet was unreliable.

- Power outages were routine.

4. Unaccountable in Failure (Monitoring & Redressal)

Grievance systems collapsed under scale:

- Thousands of unresolved cases at UIDAI.

- Elderly citizens asked to travel kilometers to fix biometric failures.

For many, a failed scan became a final verdict with no appeal.

🎭 4. Bias Types: A Deeper Look at Algorithmic Injustice

Bias is not malice. Bias is systemic unfairness baked into design choices.

Aadhaar demonstrates three core bias types.

1. Framing Bias

The problem was framed as:

“Catch fraudsters”

Instead of:

“Guarantee food and dignity”

This framing made starvation an acceptable error.

2. Performance Bias

Biometrics failed for:

- Elderly people with worn fingerprints.

- Manual laborers.

- People with cataracts or disabilities.

The technology worked best for middle-class bodies.

It was universally mandated but not universally reliable.
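
One common way to surface this kind of performance bias is to compute the false rejection rate separately for each group instead of reporting a single overall figure. The sketch below assumes a hypothetical log of authentication attempts by genuine users; the group labels and counts are invented for illustration and are not real Aadhaar data.

```python
from collections import defaultdict


def false_rejection_rates(attempts):
    """attempts: iterable of (group, accepted) pairs for genuine users only.
    Returns {group: share of genuine attempts that were rejected}."""
    totals = defaultdict(int)
    rejected = defaultdict(int)
    for group, accepted in attempts:
        totals[group] += 1
        if not accepted:
            rejected[group] += 1
    return {group: rejected[group] / totals[group] for group in totals}


# Hypothetical authentication log (illustrative numbers only).
attempts = (
    [("office_worker", True)] * 98 + [("office_worker", False)] * 2
    + [("manual_laborer", True)] * 80 + [("manual_laborer", False)] * 20
    + [("elderly", True)] * 70 + [("elderly", False)] * 30
)

rates = false_rejection_rates(attempts)
# The aggregate rate hides how unevenly the failures are distributed.
overall = sum(1 for _, accepted in attempts if not accepted) / len(attempts)
# Gap between the best-served and worst-served group.
worst_gap = max(rates.values()) - min(rates.values())

print(rates)
print(f"overall={overall:.3f}, worst_gap={worst_gap:.3f}")
```

A system can look acceptable on the overall rate while one group is rejected many times more often than another, which is why a per-group breakdown matters before a technology is made mandatory.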

3. Systemic & Exclusionary Bias

Aadhaar assumed:

- Everyone has documents.

- Everyone has phones.

- Everyone can travel to offices.

This excluded:

- Tribal communities (low birth registration).

- Women (low phone ownership).

- Migrant workers.

- People with disabilities and the elderly.

The system amplified existing inequality.

🌍 5. The Global South Lens: A Model or a Warning?

India now exports Aadhaar as Digital Public Infrastructure. But for the Global South, this raises dangerous questions.

1. Welfare Recipients as a Testbed

Biometric systems are first tested on welfare recipients because:

They cannot refuse.

The poorest become experimental subjects.

2. Amplifying the Digital Divide

In India:

- 45% lack internet access.

- Only 12% are computer literate.

Digital-first welfare becomes digital exclusion.

3. The “Kagaz” Problem

Formal documentation is rare in informal economies.

In Bihar:

- Only 3.7% of births were registered in 2000.

Digital ID demands paperwork many never had.

4. The High Stakes of Identity

Digital ID quickly becomes political:

- Citizenship.

- Voting rights.

- Migration status.

Identity infrastructure becomes a tool of governance and control.

📌 6. The Bigger Picture: Technology vs Human Dignity

Aadhaar exposes a central conflict:

Efficiency vs Fundamental Rights

The lessons are stark.

1. Rights Cannot Be Contingent on Technology

Justice Chandrachud warned:

Dignity cannot depend on algorithms or probabilities.

2. Accountability Must Be Built In

When systems fail:

- The burden must be on the state, not citizens.

- Redress must be accessible, fast, and offline-capable.

No person should starve because a scanner failed.
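
As a rough illustration of what "built-in" accountability could look like, the sketch below models a ration counter that never returns a denial when verification fails. It is a hypothetical design, not a description of how any real PDS or UIDAI system works: the `dispense_ration` function, its parameters, and the `Outcome` values are all assumptions.

```python
from enum import Enum


class Outcome(Enum):
    DISPENSED = "dispensed"
    DISPENSED_WITH_REVIEW = "dispensed, flagged for state review"


def dispense_ration(
    biometric_ok: bool, device_online: bool, has_paper_card: bool
) -> tuple[Outcome, str]:
    """Hypothetical fail-safe flow: a technical failure changes who must
    follow up (the state), never whether the person receives food."""
    if device_online and biometric_ok:
        return Outcome.DISPENSED, "biometric match"
    if has_paper_card:
        # Offline fallback: record the transaction in a paper register.
        return Outcome.DISPENSED, "offline register entry against ration card"
    # No verification possible: still dispense, and put the burden of
    # resolving the case on officials rather than on the citizen.
    return Outcome.DISPENSED_WITH_REVIEW, "case referred to officials"


# A failed scan with a paper card on hand still results in food being given.
print(dispense_ration(biometric_ok=False, device_online=True, has_paper_card=True))
# A power or network outage with no documents is flagged for review, not denied.
print(dispense_ration(biometric_ok=True, device_online=False, has_paper_card=False))
```

The design choice here is that there is no "denied" outcome at all. Suspected fraud is handled after the fact through review, so the cost of a system error falls on the administration instead of on the person at the counter.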

3. A Story of Course Correction

There have been real improvements:

- Face authentication for the elderly.

- Home enrollment for disabled citizens.

- Alternative ID pathways.

Technology can correct itself — but only after damage is done.

The ghosts in India’s digital machine are not fake identities. They are real people rendered invisible. As nations rush to digitize governance, Aadhaar forces one unavoidable question:

What is the acceptable human cost of efficiency — and who gets to decide?

📚 References

Judicial and Legal Sources

- Supreme Court of India (2018). Justice K.S. Puttaswamy (Retd.) vs Union of India. Aadhaar Constitution Bench judgment, including Justice D.Y. Chandrachud’s dissent on dignity, exclusion, and technological fallibility.

- Supreme Court of India (2023). Pragya Prasun & Ors. vs Union of India. Ruling on reasonable accommodation and the obligation of digital systems to adapt to human diversity.

Government Audits and Official Reports

- Comptroller and Auditor General of India (2022). Performance Audit on the Functioning of Unique Identification Authority of India (UIDAI). Findings on poor-quality biometric capture, enrollment deficiencies, and lack of accountability.

- Ministry of Consumer Affairs, Food & Public Distribution (Various years). Public Distribution System (PDS) beneficiary data and deletion statistics.

Academic and Policy Research

- Khera, Reetika (2019). Dissent on Aadhaar: Big Data Meets Big Brother. A comprehensive academic critique of Aadhaar’s design, framing bias, and exclusionary impacts.

- Dreze, Jean & Khera, Reetika (2017–2021). Field studies and empirical work documenting Aadhaar-linked exclusion, starvation deaths, and welfare access failures.

- Masiero, Silvia (2020). Research on biometric identification systems, welfare governance, and exclusion in low-capacity settings.

Investigative Journalism and Civil Society Documentation

- Scroll.in, The Wire, Indian Express (2017–2023). Investigative reporting on Aadhaar-linked starvation deaths, pension failures, and PDS exclusions across Jharkhand, Odisha, Rajasthan, and Chhattisgarh.

- Right to Food Campaign (India). Ground-level documentation of welfare exclusion, ration denial, and grievance redress failures linked to Aadhaar authentication.

Digital Identity and Global Context

- World Bank Group (2019). ID4D: Principles on Identification for Sustainable Development. Global standards and risks associated with digital ID systems.

- Privacy International & Electronic Frontier Foundation (Various). Analysis of biometric systems, welfare surveillance, and rights impacts in the Global South.

This reference list prioritizes primary legal rulings, official audits, peer-reviewed research, and documented field evidence to ensure accuracy and accountability.

📥 AI Fairness 101 — Real-World Incidents: Aadhaar Exclusions Case Deck (PDF)

👉 Download the Aadhaar Exclusion Case Deck (PDF)

🔎 Explore the AI Fairness 101 Series

This post is part of the AI Fairness 101 — Real-World Incidents learning track.

Stay tuned — new posts every week!

💬 Join the Conversation

Have thoughts, experiences, or questions about AI fairness? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page


🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.
