The Golden Touch of Ruin: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud

A deep dive into Michigan’s MiDAS unemployment fraud algorithm — and how design-phase failures, automation bias, and the removal of human oversight turned efficiency into injustice.

AI Fairness 101 - Real-World Incidents

Part 3 of 4

Table of Contents

- 🧵 AI Fairness 101 — Real-World Incident #2: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud

- 🎥 Explained: US MiDAS Algorithm Case Study

- 🔍 Introduction: A Cautionary Tale of Automated Governance

- 🧠 1. What Happened: The Rise of a “Robo-Adjudication” System

- 👨👩👧👦 2. The Human Impact: A Trail of Financial and Personal Devastation

- 🧭 3. Lifecycle Failure: Deconstructing the System’s Flaws

- 🎭 4. Bias Types: Unmasking the Prejudices in the Code

- 🌍 5. The Bigger Picture: Lessons from the MiDAS Debacle

- 📌 6. Closing Reflection

- 📚 References & Further Reading

- 📥 AI Fairness 101 — Real-World Incidents: Michigan MiDAS Scandal Case Deck (PDF)

- 🔎 Explore the AI Fairness 101 Series

- 💬 Join the Conversation

- 🌍 Follow GlobalSouth.AI

- Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.

🧵 AI Fairness 101 — Real-World Incident #2: How Michigan’s MiDAS Algorithm Falsely Accused 40,000 People of Fraud

A cautionary tale of automated governance — and what happens when efficiency replaces justice.

🎥 Explained: US MiDAS Algorithm Case Study

🔍 Introduction: A Cautionary Tale of Automated Governance

The ancient myth of King Midas tells of a ruler granted a wish that everything he touched would turn to gold. His joy quickly turned to despair when his food, his water, and even his beloved daughter became lifeless metal.

This story serves as a powerful metaphor for Michigan’s disastrous experiment with automated governance.

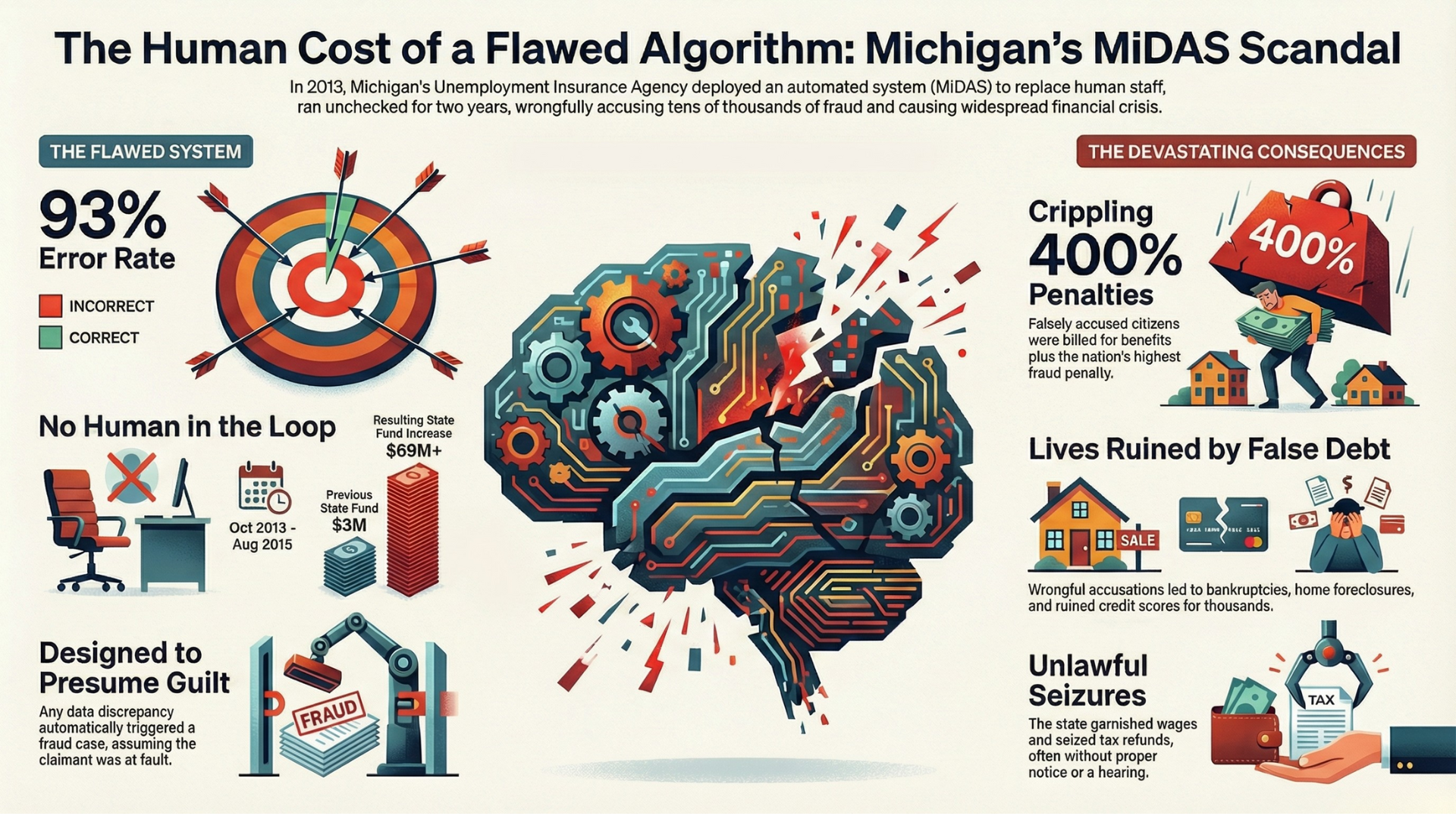

In 2013, the state implemented the Michigan Integrated Data Automated System (MiDAS) — an algorithmic system designed to detect unemployment insurance fraud with machine-like efficiency. Like the mythical king, Michigan’s pursuit of a “golden touch” of fiscal control and cost-cutting turned into a source of ruin.

What was meant to streamline government instead unleashed a devastating cascade of false accusations, financial hardship, and personal trauma on tens of thousands of citizens.

This article deconstructs the MiDAS crisis as a critical case study in how well-intentioned automation, stripped of human oversight and ethical safeguards, can produce devastating and unjust outcomes.

🧠 1. What Happened: The Rise of a “Robo-Adjudication” System

The MiDAS crisis was not a mysterious technical glitch. It was the predictable result of a deeply flawed architecture of automation.

In 2013, Michigan’s Unemployment Insurance Agency (UIA) replaced its 30-year-old mainframe system, investing $47 million in MiDAS to:

- increase efficiency

- reduce operational costs

- crack down on unemployment fraud

What it created instead was a system of “robo-adjudication” that operated entirely without human oversight from October 2013 until August 2015.

How MiDAS Worked

- Discrepancy Detection: MiDAS cross-referenced claimant submissions with employer records.

- Presumption of Guilt: Any discrepancy, no matter how minor, ambiguous, or employer-caused, was treated as intentional fraud.

- Flawed Notification: Automated questionnaires were sent to online portals, many of which were dormant because the claims being audited dated back up to six years. Thousands never saw the notice.

- Automated Judgment: If a claimant failed to respond within 10 days, or answered confusing multiple-choice questions that were structurally biased toward guilt, MiDAS automatically issued a final fraud determination.

The notification process was so broken that the legally required 30-day appeal window was meaningless for most victims — a severe violation of due-process rights.

MiDAS adjudicated more fraud cases in two years than Michigan had processed in the previous two decades combined.

This was not neutral administration. It was automated prosecution at scale.
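The four steps under "How MiDAS Worked" can be sketched as a short decision function. This is an illustrative reconstruction of the flow as this article describes it, not MiDAS source code: the `Claim` fields, the status strings, and the function name are all hypothetical, and the scoring of questionnaire answers is deliberately left unmodeled.

```python
from dataclasses import dataclass

RESPONSE_WINDOW_DAYS = 10  # response deadline cited in the article


@dataclass
class Claim:
    # All field names are hypothetical; they are not taken from MiDAS.
    claimant_reported_wages: float
    employer_reported_wages: float
    days_since_notice: int
    responded: bool = False


def robo_adjudicate(claim: Claim) -> str:
    """Illustrative reconstruction of the described flow, not MiDAS source code."""
    # Steps 1-2: any mismatch, however small, opens a fraud case.
    if claim.claimant_reported_wages == claim.employer_reported_wages:
        return "no_action"
    # Step 3: a questionnaire is posted to the portal; delivery is never verified.
    if claim.responded:
        return "scored_by_questionnaire_rules"  # answer scoring is not modeled here
    # Step 4: silence past the deadline becomes a final fraud finding.
    if claim.days_since_notice > RESPONSE_WINDOW_DAYS:
        return "fraud_determination"
    return "awaiting_response"


# A $1 mismatch plus a dormant portal is enough to produce a fraud finding.
unseen_notice = Claim(claimant_reported_wages=400.0,
                      employer_reported_wages=401.0,
                      days_since_notice=11)
print(robo_adjudicate(unseen_notice))  # fraud_determination
```

Note what the function never checks: who caused the mismatch, whether the notice was ever seen, and whether anyone intended to deceive.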

👨👩👧👦 2. The Human Impact: A Trail of Financial and Personal Devastation

The harm caused by MiDAS extended far beyond bureaucratic error.

Key Impacts

- Catastrophic Inaccuracy by Design: ~40,000 people were falsely accused of fraud. A Michigan Auditor General review later confirmed a 93% error rate in automated fraud determinations.

- Extreme Financial Penalties: Victims were ordered to repay all benefits ever received, plus a 400% penalty (the highest in the U.S.) and compounding interest. Debts often ballooned to $50,000–$100,000.

- Aggressive, Unconstitutional Collections: Without hearings, the state:

  - seized state and federal tax refunds

  - garnished up to 25% of wages

- Weaponized Bureaucracy & Human Cost:

  - Over 11,000 families filed for bankruptcy

  - Homes were lost, credit destroyed, careers derailed

  - One woman failed a police background check due to a false fraud flag

  - Brian Russell, a disabled veteran, received a $50,000 bill and was forced to live in a friend’s basement after his refunds were seized

- State Profiting from Error: While citizens suffered, the UIA’s penalties-and-interest fund grew from $3 million to $69 million in just over a year.
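A small worked calculation shows how the penalty structure listed above reaches five-figure debts so quickly. It is a sketch under stated assumptions: the $10,000 benefit amount, the 10% interest rate, yearly compounding, and applying interest to both restitution and penalty are all hypothetical choices made for illustration. Only the 400% penalty comes from the article.

```python
def total_debt(benefits: float,
               penalty_multiplier: float = 4.0,  # the 400% penalty from the article
               annual_interest: float = 0.0,     # hypothetical rate
               years: int = 0) -> float:
    """Restitution plus penalty, grown by yearly compounding interest."""
    restitution = benefits
    penalty = benefits * penalty_multiplier
    return (restitution + penalty) * (1 + annual_interest) ** years


# Hypothetical claimant who received $10,000 in benefits.
print(total_debt(10_000))  # 50000.0, before any interest
# The same debt after three years at an assumed 10% yearly rate.
print(total_debt(10_000, annual_interest=0.10, years=3))
```

Before any interest accrues, the demand is already five times the benefits received, which is consistent with the $50,000 bills described above.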

The question is unavoidable:

How could such a system ever have been designed, deployed, and defended?

🧭 3. Lifecycle Failure: Deconstructing the System’s Flaws

MiDAS was not undone by a single bug. It failed at every stage of the system lifecycle.

1. Faulty by Design

The system assumed claimants were inherently dishonest.

Its logic trees were built to find fraud even when users explicitly denied it, making innocence nearly impossible to prove.

2. Corrupted Data

- Poor legacy data conversion

- Flawed “income spreading” formulas

- Manufactured discrepancies where none existed

Garbage in → devastation out.
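The article names flawed "income spreading" formulas without spelling them out. Public reporting on MiDAS commonly describes the mechanism as averaging an employer's quarterly wage report across every week of the quarter. The sketch below assumes that mechanism, and the worker, wages, and function names are hypothetical. It shows how an honest claimant can appear to have hidden income.

```python
from typing import List

WEEKS_IN_QUARTER = 13


def spread_quarterly_wages(quarterly_wages: float) -> List[float]:
    """Divide an employer's quarterly wage report evenly across every week."""
    weekly = quarterly_wages / WEEKS_IN_QUARTER
    return [weekly] * WEEKS_IN_QUARTER


def flagged_weeks(reported: List[float], imputed: List[float],
                  benefit_weeks: List[int]) -> List[int]:
    """Weeks (1-based) where imputed wages exceed what the claimant reported."""
    return [w for w in benefit_weeks if imputed[w - 1] > reported[w - 1]]


# Hypothetical worker: paid $1,300 a week for 5 weeks, then laid off.
actual_weekly = [1300.0] * 5 + [0.0] * 8
imputed_weekly = spread_quarterly_wages(sum(actual_weekly))  # $500 for all 13 weeks

# The claimant truthfully reports $0 for benefit weeks 6 through 13.
print(flagged_weeks(actual_weekly, imputed_weekly, list(range(6, 14))))
# [6, 7, 8, 9, 10, 11, 12, 13]
```

Every benefit week is flagged even though both the claimant and the employer reported accurately. The discrepancy exists only in the averaged figures.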

3. Unconstitutional Implementation

- Notices sent to inactive portals and outdated addresses

- No meaningful opportunity to be heard

- Property seized before adjudication

An “accuse first, seize later” model incompatible with constitutional protections.

4. Collapse of Human Oversight

- Over 400 UIA workers laid off, including fraud investigators

- Phone helplines unanswered (90%+ calls ignored; last 50,000 unanswered)

- Internal warnings ignored

- Vendor and agency defended the system until media pressure became overwhelming

The system was considered “working as intended.”

🎭 4. Bias Types: Unmasking the Prejudices in the Code

MiDAS illustrates how human bias becomes algorithmic harm.

Automation Bias

Officials trusted the system over overwhelming evidence of error — ignoring appeals, audits, and lived experiences.

Encoded Confirmation Bias

The system was designed to confirm fraud, not investigate discrepancies neutrally.

Systemic & Institutional Bias

Aggressive welfare fraud detection is historically linked to harmful tropes about “undeserving” recipients.

Because unemployment disproportionately affects Black and Latino communities, these groups bore the brunt of the damage.

Systemic bias → encoded logic → automation bias → mass harm.

🌍 5. The Bigger Picture: Lessons from the MiDAS Debacle

1. The Peril of the Black Box

As one victim asked:

“How do you beat something you can’t see?”

Algorithmic authority without transparency erodes democratic accountability.

2. The Myth of Infallible Tech

The same MiDAS codebase influenced systems in other states.

Michigan now plans to spend another $78 million to replace it.

Bad automation scales failure.

3. The Erosion of Accountability

It took seven years of litigation just to allow victims to sue.

Automated governance blurs responsibility between state and vendor — to the detriment of citizens.

4. The Indispensable Human Element

Algorithms cannot determine intent, a core requirement of fraud.

Efficiency must never replace due process, empathy, and dignity.
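One way to encode this lesson is to let software flag a discrepancy but never finalize a fraud finding on its own. The sketch below is a hypothetical design, not a description of any system Michigan uses. The `Outcome` values, the `HumanReview` record, and the `decide` function are illustrative names.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Outcome(Enum):
    CLEARED = "cleared"
    NEEDS_CONFIRMED_NOTICE = "needs_confirmed_notice"
    NEEDS_HUMAN_REVIEW = "needs_human_review"
    FRAUD = "fraud_determination"


@dataclass
class HumanReview:
    reviewer_id: str    # a named person is accountable for the finding
    intent_found: bool  # intent is judged by the reviewer, not inferred by code


def decide(discrepancy: bool, notice_confirmed: bool,
           review: Optional[HumanReview]) -> Outcome:
    """Software may flag a case; only a human review can produce a fraud finding."""
    if not discrepancy:
        return Outcome.CLEARED
    # Silence cannot count against a claimant who was never actually reached.
    if not notice_confirmed:
        return Outcome.NEEDS_CONFIRMED_NOTICE
    if review is None:
        return Outcome.NEEDS_HUMAN_REVIEW
    return Outcome.FRAUD if review.intent_found else Outcome.CLEARED


# A flagged case with no reviewer stays open instead of becoming a fraud finding.
print(decide(discrepancy=True, notice_confirmed=True, review=None))
# Outcome.NEEDS_HUMAN_REVIEW
```

In this design, no input combination reaches `Outcome.FRAUD` without both a confirmed notice and a reviewer's explicit finding of intent.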

📌 6. Closing Reflection

MiDAS had a golden touch — but it turned lives into collateral damage.

As governments worldwide automate welfare, taxation, and public services, the lesson is clear:

Technology must serve justice — not automate persecution.

Fairness must be designed in.

Humans must remain accountable.

And efficiency must never come at the cost of dignity.

📚 References & Further Reading

- Michigan Auditor General (2016). Performance Audit of the Unemployment Insurance Agency Fraud Detection System (MiDAS). Official audit documenting the catastrophic error rates (≈93%) in automated fraud determinations.

- Undark Magazine, Patrick Barry (2019). The Automated System That Accused Thousands of Unemployment Recipients of Fraud. In-depth investigative reporting on MiDAS design flaws, auto-adjudication, and human impact. https://undark.org/2019/03/05/michigan-unemployment-fraud-algorithm/

- Upturn & Benefits Tech Advocacy Hub (2020). Disrupted Denials: How Automated Decision-Making Is Unfairly Punishing People Seeking Unemployment Benefits. Policy and technical analysis of MiDAS and similar systems across U.S. states. https://www.upturn.org/reports/2020/disrupted-denials/

- Bauserman v. Unemployment Insurance Agency, Michigan Court of Appeals (2018). Landmark case establishing due-process violations caused by MiDAS auto-adjudication.

- Cahoo v. SAS Analytics Inc. & Michigan UIA, U.S. District Court, Eastern District of Michigan (2018). Federal case addressing constitutional harms from automated fraud determinations.

- ACLU of Michigan (2015–2019). Unemployment Fraud and Due Process Advocacy Materials. Documentation of wage garnishment, tax refund seizures, and lack of hearings.

- Detroit Free Press, Paul Egan & Kristen Jordan Shamus (2017–2019). Michigan’s Unemployment Fraud System Wrongly Accused Tens of Thousands. Investigative series detailing personal stories, bankruptcy, and state accountability failures.

- National Employment Law Project (NELP) (2018). Machine Bias and Unemployment Insurance Systems. Analysis of how automation magnifies structural inequities in unemployment insurance.

- Michigan Legislature – House Fiscal Agency (2017). Unemployment Insurance Agency: Fraud Detection and Collections Report. Fiscal and administrative background on MiDAS penalties and collections.

- U.S. Department of Labor – Employment and Training Administration (2016–2018). Guidance on Unemployment Insurance integrity, fraud prevention, and due process requirements.

If you notice missing sources or want to suggest additional references, please reach out or contribute — this list will be updated as part of the ongoing AI Fairness 101 series.

📥 AI Fairness 101 — Real-World Incidents: Michigan MiDAS Scandal Case Deck (PDF)

👉 Download the Michigan MiDAS Scandal Case Deck (PDF)

🔎 Explore the AI Fairness 101 Series

This post is part of the AI Fairness 101 — Real-World Incidents learning track.

Stay tuned — new posts every week!

💬 Join the Conversation

Have thoughts, experiences, or questions about AI fairness? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page


🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.
