How AI Bias Locked Out Millions of Job Seekers (A Case Study on Mobley v. Workday)

The Mobley v. Workday lawsuit represents a landmark shift in legal accountability, establishing that AI software vendors can be held liable as agents for discriminatory hiring practices that exclude qualified candidates. The case highlights how black box algorithms can systematically penalize individuals based on race, age, and disability through biased training data and the use of neutral proxies. This legal evolution signals a broader mandate for Accountability by Design, requiring employers and developers to ensure transparency and human oversight in automated recruiting systems.

AI Fairness 101 - Real-World Incidents

Part 10 of 10

Table of Contents

- 🎥 Explained: How AI Bias Locked Out Millions of Job Seekers

- The Digital Gatekeeper: How AI Bias Locked Out Millions of Job Seekers (A Case Study on Mobley v. Workday)

- ⚠️ Key Takeaway

- 1. What Happened: The Lawsuit That Changed Everything ⚖️

- 2. Impact: The Human Cost of Automated Rejection 📉

- 3. Lifecycle Failure: Where the Machine Went Wrong 🛠️

- 4. Bias Types: Proxies, Patterns, and the “Coded Gaze” 🔍

- 5. Global South Lens: Exporting Bias to the World 🌍

- 6. Bigger Picture: The Future of Algorithmic Accountability 🚀

- 7. References 📚

🎥 Explained: How AI Bias Locked Out Millions of Job Seekers

The Digital Gatekeeper: How AI Bias Locked Out Millions of Job Seekers (A Case Study on Mobley v. Workday)

- 🛠️ System used: Workday's hiring software allegedly screened and ranked candidates for employers at massive scale

- 👥 Most affected group: applicants allegedly disadvantaged on the basis of race, age, disability, and other protected traits

- ⚠️ Core allegation: automated screening tools reproduced historical hiring bias and blocked candidates before human review

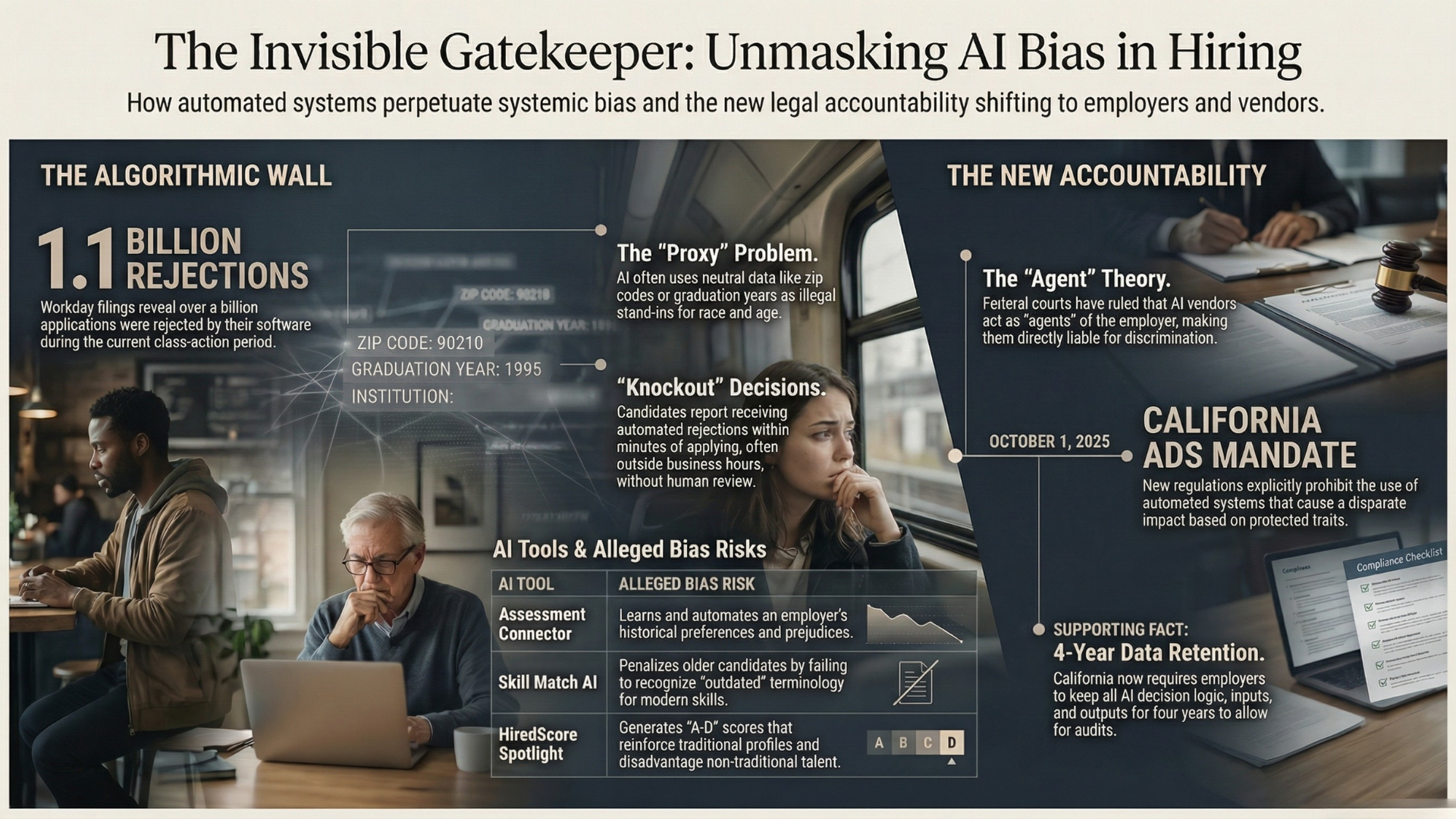

- 📉 Scale at issue: court filings discuss roughly 1.1 billion rejected applications since September 24, 2020

⚠️ Key Takeaway

The Workday litigation is not just about biased outputs. It tests whether software vendors can be held accountable when their tools become the real hiring gatekeepers.

1. What Happened: The Lawsuit That Changed Everything ⚖️

The Mobley v. Workday case represents a seismic shift in the enforcement of civil rights within our increasingly automated labor market. For too long, software vendors have operated in a legal “gray zone,” providing powerful algorithmic tools that determine who works and who doesn’t, while successfully offloading all legal liability onto the employers who purchase them. This lack of accountability is a systemic threat to the integrity of our civil rights laws. By holding software developers—not just employers—responsible for the logic of the tools they build, the judiciary is finally closing an enforcement gap that has threatened to gut anti-discrimination protections in the age of AI.

The human face of this battle is Derek Mobley, a highly qualified African American information technology professional over the age of 40 who manages a disclosed disability (anxiety and depression). Despite his credentials, Mobley faced a wall of categorical rejections after applying to more than 100 positions at companies utilizing Workday’s AI screening tools. These rejections were often delivered within minutes of submission—including one notable notification timestamped at 1:50 a.m.—suggesting that no human eyes ever reviewed his application.

At the heart of the case is the “Agent Theory” of liability. Workday argued it was merely a software provider, but U.S. District Judge Rita Lin ruled that a vendor acts as an “agent” of an employer when it is delegated functions traditionally handled by humans, such as the “hiring gatekeeper” role. Because Workday processes approximately 25% of all job postings in the United States, this ruling ensures that the scale of the software does not grant it immunity from the law.

Case Snapshot

- The Plaintiff: Derek Mobley, a qualified IT professional who is African American, over 40, and managing anxiety and depression.

- The Accusation: Systemic “algorithmic exclusion” in which AI screening tools penalize candidates based on protected characteristics.

- The Scale: Workday filings state that 1.1 billion applications were rejected by its software since September 24, 2020, creating a potential class of hundreds of millions of job seekers.

These automated rejections did more than just deny a qualified man a job; they represent a fundamental denial of the right to compete on equal footing in the modern economy.

2. Impact: The Human Cost of Automated Rejection 📉

Under the “Disparate Impact” theory, a hiring practice is illegal if it disproportionately harms a protected group, regardless of whether the discrimination was “on purpose.” Bias does not need intent to be devastating. In Mobley, the court rejected Workday’s argument that collective treatment was improper, ruling that the “common injury” is the systemic denial of the right to compete fairly.
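Disparate impact is usually assessed by comparing selection rates across groups. The sketch below is a minimal illustration, not the method used in the case: it computes each group's selection rate, divides by the highest rate to get an impact ratio, and flags any ratio below 0.8. That cutoff is the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines, which this article does not itself cite, and the group labels and counts are invented for the example.

```python
from collections import Counter


def selection_rates(records):
    """Return {group: selected / applied} from (group, was_selected) pairs."""
    applied = Counter()
    selected = Counter()
    for group, was_selected in records:
        applied[group] += 1
        if was_selected:
            selected[group] += 1
    return {g: selected[g] / applied[g] for g in applied}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so there is no rate to compare against.
        return {g: 0.0 for g in rates}
    return {g: r / best for g, r in rates.items()}


# Hypothetical screening outcomes (invented numbers, not case data).
records = (
    [("under_40", True)] * 60 + [("under_40", False)] * 40
    + [("40_plus", True)] * 30 + [("40_plus", False)] * 70
)

rates = selection_rates(records)
ratios = impact_ratios(rates)

# Rule-of-thumb screen: a ratio under 0.8 warrants a closer look.
flagged = [g for g, r in ratios.items() if r < 0.8]
print(rates, ratios, flagged)
```

A flagged ratio is a signal for further review, not a legal conclusion. Note that the calculation never asks why the rates differ, which mirrors the point that intent is not required.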

The human cost is measured in the psychological toll of “knockout” rejections delivered by unfeeling algorithms in the middle of the night. When an applicant receives a rejection email at 1:50 a.m., mere minutes after applying, the message is clear: the system was never designed to see your value. This creates a “rejection loop” where the most vulnerable qualified talent is discarded before a human recruiter even begins their workday.

| Protected characteristic | Evidence of impact in the case allegations |
|---|---|
| Race and Age | Biased training data that replicates historical patterns of exclusion, penalizing candidates who do not fit a “traditional” profile |
| Disability | Use of biometric and personality tests, including pymetrics, that systematically disadvantage candidates with mental health or cognitive disorders |
| Gender | Algorithmic “hallucinations” and biased datasets that associate professional seniority primarily with masculinity |

These failures are not isolated glitches; they are the result of specific breakdowns across the entire software lifecycle.

3. Lifecycle Failure: Where the Machine Went Wrong 🛠️

Algorithmic bias is a direct result of the “Garbage In, Garbage Out” (GIGO) principle. If an AI is trained on historical data reflecting decades of biased human hiring, it won’t just replicate those patterns—it will amplify them. This is compounded by the “AI Tax,” a productivity paradox where 40% of gains are lost to “rework” as humans must fix AI errors. Yet, many companies skip this manual oversight, allowing “black box” decisions to stand.

Specific Tool Failures Identified

- Assessment Connector: This tool “learns” by observing employer preferences. If an employer historically disfavors certain demographics, the AI re-ranks the candidate flow to decrease recommendations for those groups, automating pre-existing prejudice.

- Skill Match AI: This algorithm frequently penalizes older candidates by failing to recognize relevance when “outdated” terminology is used for experience identical to that of younger peers.

- HiredScore Spotlight: Generates “A-D” scores based on “patterns of success.” This creates a feedback loop that disadvantages non-traditional or diverse candidates who do not mirror historical hires.
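The feedback loop described in these allegations can be illustrated with a toy ranker. This is a deliberately simplified sketch and not how any Workday product is implemented: the hypothetical `shortlist` function scores each candidate by how often the employer advanced people from the same group in the past, then keeps only the top `k`. A real system would more likely pick up the signal through correlated features than through an explicit group field.

```python
def historical_rate(history, group):
    """Share of past applicants in `group` that the employer advanced."""
    outcomes = [advanced for g, advanced in history if g == group]
    return sum(outcomes) / len(outcomes)


def shortlist(candidates, history, k):
    """Rank candidates by their group's historical rate and keep the top k."""
    ranked = sorted(
        candidates,
        key=lambda c: historical_rate(history, c["group"]),
        reverse=True,
    )
    return ranked[:k]


# Hypothetical history: group A was advanced 60% of the time, group B 40%.
history = [("A", 1)] * 6 + [("A", 0)] * 4 + [("B", 1)] * 4 + [("B", 0)] * 6

# Ten equally qualified applicants, alternating between the two groups.
candidates = [{"id": i, "group": "A" if i % 2 == 0 else "B"} for i in range(10)]

picked = shortlist(candidates, history, k=5)
share_b = sum(c["group"] == "B" for c in picked) / len(picked)
print(share_b)
```

In this toy setup a 60/40 gap in past decisions becomes a shortlist with no group B candidates at all, because a top-k cutoff turns a modest difference in scores into complete exclusion. If those shortlist outcomes were then fed back in as new history, the gap would widen further.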

These failures demonstrate how bias is often hidden within seemingly neutral data patterns through “proxy bias.”

4. Bias Types: Proxies, Patterns, and the “Coded Gaze” 🔍

Proxy Bias occurs when AI uses neutral data—like zip codes or graduation years—to guess a person’s race or age. Even when race is removed as a field, the algorithm uses a candidate’s neighborhood or university as a proxy to filter them out.
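One rough way to check whether a field behaves as a proxy is to ask how well it predicts the protected attribute on its own. The sketch below assumes a hypothetical applicant table with `zip` and `group` columns (invented values) and compares two guessing strategies: always guessing the most common group, versus guessing the most common group within each zip code.

```python
from collections import Counter, defaultdict


def proxy_strength(rows, feature, protected):
    """Return (baseline_accuracy, proxy_accuracy) for guessing `protected`.

    The baseline always guesses the most common protected value overall.
    The proxy guesses the most common protected value within each value
    of `feature`.
    """
    overall = Counter(r[protected] for r in rows)
    baseline = overall.most_common(1)[0][1] / len(rows)

    by_value = defaultdict(Counter)
    for r in rows:
        by_value[r[feature]][r[protected]] += 1
    correct = sum(c.most_common(1)[0][1] for c in by_value.values())
    return baseline, correct / len(rows)


# Hypothetical data: two zip codes with very different group make-ups.
rows = (
    [{"zip": "11111", "group": "X"}] * 45 + [{"zip": "11111", "group": "Y"}] * 5
    + [{"zip": "22222", "group": "X"}] * 5 + [{"zip": "22222", "group": "Y"}] * 45
)

baseline, proxy = proxy_strength(rows, "zip", "group")
print(baseline, proxy)
```

Here the zip code alone lifts guessing accuracy from 50% to 90%, so deleting the `group` column would not stop a model from recovering it. A large gap between the two numbers is a reason to examine the feature, not proof that a model actually uses it that way.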

Groundbreaking research from Stanford and the University of Washington (2025) highlights the “Coded Gaze,” where AI models associate professional seniority and authority primarily with masculinity:

- Race Bias 👤: Audits show resumes with White-associated names are selected 85% of the time, while Black men face the greatest disadvantage: in some head-to-head comparisons, resumes with Black male names were passed over 100% of the time.

- Age/Gender Bias 👩‍🦳: A Nature study (Oct 2025) revealed that AI assumes women are 1.6 years younger and have less experience than men with identical resumes. ChatGPT consistently rated older male applicants as more qualified.

- Disability Bias 🧠: Automated “personality” scores can act as prohibited medical exams under the ADA if they measure reaction time, dexterity, or neurological traits unrelated to the actual job requirements.

These biases are no longer confined to Silicon Valley; they are being exported globally through a centralized human capital ecosystem.

5. Global South Lens: Exporting Bias to the World 🌍

We now live in a Global Human Capital Management Ecosystem. Silicon Valley tools (Workday is used by 65% of the Fortune 500) set the hiring rules for the entire world. However, these tools are built on Western datasets that often ignore the nuances of labor markets in the Global South.

In international markets like Shenzhen and broader Asia, AI systems are even more integrated but operate as total “black boxes” with no published research or legal paths to challenge rejections. This leaves global workers at the mercy of Silicon Valley logic with no recourse.

Employers are caught in a “liability squeeze.” While courts hold them responsible for discriminatory outcomes, 88% of AI vendor contracts impose strict liability caps, and only 17% provide compliance warranties. This leaves global companies responsible for the “black box” outputs of tools they are contractually barred from auditing.

6. Bigger Picture: The Future of Algorithmic Accountability 🚀

The industry is moving from “Efficiency at All Costs” to a mandate of “Accountability by Design.” Governments are finally treating hiring AI as a high-risk technology that must be transparent and fair.

The New Regulatory Landscape:

- California ADS Mandate (Oct 2025): Requires 4-year record retention and explicitly defines vendors as “agents” liable for discrimination.

- NYC Local Law 144: Mandates annual independent bias audits and public “impact ratio” reporting.

- EU AI Act: Classifies employment AI as “High-Risk,” requiring strict data governance and human oversight.

Checklist for Fairness: 6 Things Employers Should Do

- Audit Your Vendors: Demand documentation on bias testing and training data.

- Retain Human Oversight: Ensure AI is supplementary; never let an algorithm make a final “knockout” decision alone.

- Document Criteria and Justifications: Maintain clear records of the rationale behind every hiring decision.

- Monitor for Disparate Impact: Regularly analyze your data for age, race, and gender disparities.

- Get Your Governance House in Order: Establish an AI Governance program to set guardrails and best practices.

- Stay Tuned to Legal Shifts: Courts, not just agencies, are now defining AI liability.
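Two items on this checklist, retaining human oversight and documenting justifications, can be combined in a simple routing rule. The sketch below is one possible design under stated assumptions, not a compliance recipe: the `Decision` record, the `route` function, the status names, and the 0.5 threshold are all hypothetical. The key property is that a low score never produces an automated rejection; it only queues the application for a person, and every outcome is recorded with a rationale and a timestamp.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Decision:
    candidate_id: str
    model_score: float
    status: str  # "advance" or "needs_human_review"
    rationale: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def route(candidate_id, model_score, threshold=0.5):
    """Advance high scores; send low scores to a person instead of rejecting."""
    if model_score >= threshold:
        return Decision(
            candidate_id, model_score, "advance",
            f"score {model_score:.2f} >= threshold {threshold:.2f}",
        )
    return Decision(
        candidate_id, model_score, "needs_human_review",
        f"score {model_score:.2f} < threshold {threshold:.2f}; "
        "no automated rejection issued",
    )


# Hypothetical candidates and scores; the log is what would be retained.
audit_log = [route("c-001", 0.82), route("c-002", 0.31)]
for entry in audit_log:
    print(entry.candidate_id, entry.status, entry.rationale)
```

Keeping the rationale and timestamp on each record also supports the record-retention and audit obligations listed above, since the log can later be analyzed for disparities by group.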

AI must be a tool for inclusion, not a digital wall. By adopting “Accountability by Design,” we ensure that technology strengthens our civil rights rather than gutting them.

7. References 📚

- AI Bias Lawsuit Against Workday Reaches Next Stage as Court Grants Conditional Certification of ADEA Claim, Law and the Workplace (June 11, 2025). https://www.lawandtheworkplace.com/2025/06/ai-bias-lawsuit-against-workday-reaches-next-stage-as-court-grants-conditional-certification-of-adea-claim/

- Mobley v. Workday, Inc. Filings, Civil Rights Litigation Clearinghouse. https://clearinghouse.net/case/44074/

- Age and gender distortion in online media and large language models, Nature (October 8, 2025). https://newsroom.haas.berkeley.edu/news-release/women-portrayed-as-younger-than-men-online-and-ai-amplifies-the-bias/

- Discrimination Lawsuit Over Workday’s AI Hiring Tools Can Proceed as Class Action: 6 Things Employers Should Do, Fisher Phillips (May 20, 2025). https://www.fisherphillips.com/en/insights/insights/discrimination-lawsuit-over-workdays-ai-hiring-tools-can-proceed-as-class-action-6-things

- AI Bias in Hiring: Algorithmic Recruiting and Your Rights, Sanford Heisler Sharp McKnight (December 16, 2025). https://sanfordheisler.com/blog/ai-bias-in-hiring-algorithmic-recruiting-and-your-rights/

- The Legal Implications of AI-Driven Hiring Decisions: What HR Leaders Need to Know in 2026, HR C-Suite. https://hrcsuite.com/the-legal-implications-of-ai-driven-hiring-decisions/
