AI’s Green Promises: A Deceptive Mirage of Sustainability

Why efficiency-focused 'Green AI' claims often hide deeper environmental, social, and governance costs—especially in the Global South.

AI Sustainability 101

Part 1 of 1

Table of Contents

- AI’s Green Promises: A Deceptive Mirage of Sustainability

- 🎥 Explained: AI’s Green Promises: Why “Sustainable AI” Is Often a Mirage

- Abstract

- 1. Introduction

- 2. The Partial Picture: Deconstructing “Green AI” Metrics

- 3. A Holistic Lens: The ESG Framework for AI Sustainability

- 4. Amplified Challenges in the Global South

- 5. Enforceable Sustainability: Moving from Pledges to Proof

- 6. Collaboration and Shared Responsibility

- 7. Conclusion: Towards Verifiable Sustainability and Accountability

- <img src="/assets/images/AISustainability101_2025_GreenAI-mirage-of-sustainability_infographic_v1.png" alt="AI sustainability claims exposed" style="width:100%; border-radius:10px; margin: 1rem 0;" />

- 📥 AI Sustainability 101 — AI Sustainability Explainer Deck (PDF)

- 💬 Join the Conversation

- 🌍 Follow GlobalSouth.AI



“AI’s ‘green’ promises are often a mirage: if a company cannot publish its true, independently audited ESG lifecycle impact, should it even be permitted to scale its operations?”

🎥 Explained: AI’s Green Promises: Why “Sustainable AI” Is Often a Mirage

Abstract

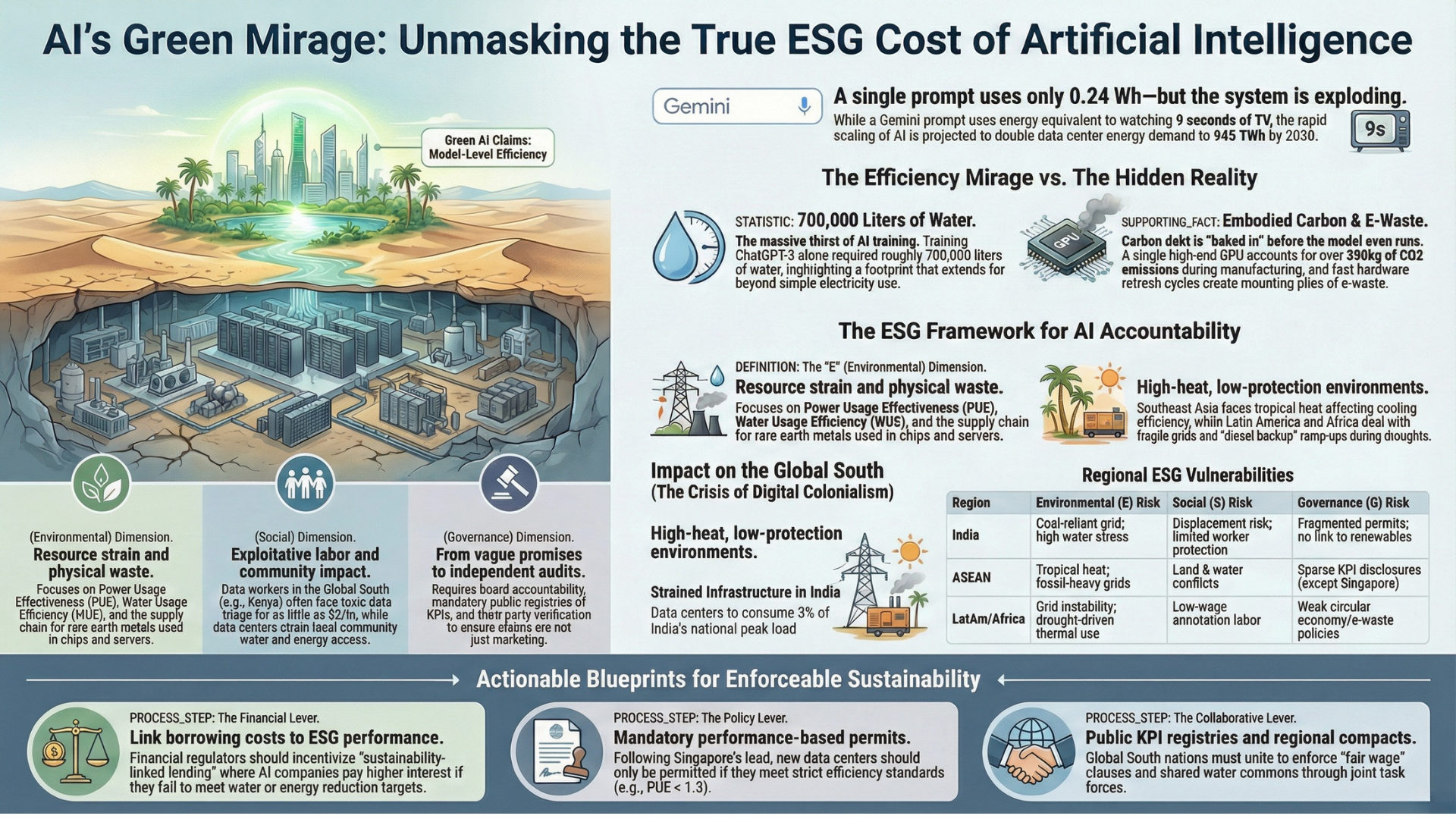

This article critically analyses so-called “green AI” claims, arguing that they often mask significant environmental, social, and governance (ESG) costs. A lifecycle perspective reveals impacts these claims leave out, such as high training energy, massive water consumption, embodied carbon, and growing e-waste. The exponential scaling of AI amplifies these costs and can outweigh improvements in individual model efficiency.

Viewed through an ESG lens, these hidden costs span:

- Environmental: resource strain and waste

- Social: exploitative labour and community impact

- Governance: weak accountability and disclosure

These issues are particularly magnified in the Global South, raising the risk of digital colonialism. The article proposes concrete policy interventions—including ESG-linked underwriting, sustainability-linked lending, performance-based permits, mandatory disclosures, and independent assurance—to move from vague sustainability promises to verifiable accountability.

1. Introduction

Artificial Intelligence (AI) is growing rapidly, with frequent announcements of innovations framed as environmentally friendly and sustainable. However, closer scrutiny reveals that many “green AI” claims do not reflect the full reality.

Like a mirage, these claims obscure the true environmental, social, and governance (ESG) costs of deploying and scaling AI technologies. This article offers a critical lens to evaluate AI sustainability claims, examine their real costs, and propose policy mechanisms to transition from ambiguous commitments to demonstrable sustainability.

2. The Partial Picture: Deconstructing “Green AI” Metrics

Green AI narratives often rely on narrow efficiency metrics that capture only a fraction of AI’s true footprint.

For example, Google has publicly stated that its median Gemini text prompt consumes only 0.24 Wh, roughly equivalent to watching television for nine seconds, and reports a 44-fold reduction in the carbon footprint per prompt within a year. While such efficiency gains are relevant, they omit major contributors to AI’s lifecycle impact.
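The television comparison can be sanity-checked with unit arithmetic. A minimal sketch, assuming the comparison refers to a television drawing constant power: energy in watt-hours equals power in watts times seconds divided by 3,600, so the quoted figures imply a set of roughly 96 W. The function names are illustrative.

```python
# Unit check for the "0.24 Wh per prompt is about nine seconds of TV" comparison.
# Assumption: the TV draws constant power, so E (Wh) = P (W) * t (s) / 3600.

SECONDS_PER_HOUR = 3600

def implied_power_w(energy_wh: float, seconds: float) -> float:
    """Constant power (W) that would use `energy_wh` over `seconds`."""
    return energy_wh * SECONDS_PER_HOUR / seconds

def energy_wh(power_w: float, seconds: float) -> float:
    """Energy (Wh) used by a device drawing `power_w` for `seconds`."""
    return power_w * seconds / SECONDS_PER_HOUR

# Figures as reported in the text.
wh_per_prompt = 0.24
tv_seconds = 9

tv_watts = implied_power_w(wh_per_prompt, tv_seconds)
print(f"Implied TV power: {tv_watts:.0f} W")  # Implied TV power: 96 W
```

The check only confirms that the comparison is internally consistent; it says nothing about training, water, or embodied emissions, which the per-prompt figure excludes.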

Key exclusions include:

- Training energy, which is rarely disclosed

- Water usage, not only for cooling data centres but also indirectly through electricity generation

- Embodied carbon, arising from the manufacture of chips, GPUs, servers, racks, steel, and concrete—costs incurred before any model is deployed

- E-waste, driven by rapid hardware refresh cycles

For instance, training GPT-3 alone is estimated to have required 700,000 litres of water, and the embodied carbon of a single high-end GPU can exceed 300 kg of CO₂.
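To see why a per-prompt inference figure understates the footprint, the sketch below amortises one-off training and embodied emissions over every prompt a model serves. It is a simplified allocation model, and every number in the usage lines is a hypothetical placeholder, not a measured value for any real system.

```python
from dataclasses import dataclass

@dataclass
class ModelFootprint:
    """Carbon attributable to one deployed model (kg CO2e).

    Simplified allocation: one-off emissions are spread evenly
    over all prompts served during the model's service life.
    """
    inference_kg_per_prompt: float  # operational emissions per prompt
    training_kg: float              # one-off training emissions
    embodied_kg: float              # share of hardware manufacturing emissions
    lifetime_prompts: float         # total prompts served before retirement

    def lifecycle_kg_per_prompt(self) -> float:
        # Add the amortised one-off emissions to the per-prompt inference cost.
        one_off = self.training_kg + self.embodied_kg
        return self.inference_kg_per_prompt + one_off / self.lifetime_prompts

    def understatement_factor(self) -> float:
        # How many times larger the lifecycle figure is than inference alone.
        return self.lifecycle_kg_per_prompt() / self.inference_kg_per_prompt


# Hypothetical inputs for illustration only.
fp = ModelFootprint(
    inference_kg_per_prompt=3e-5,
    training_kg=500_000,
    embodied_kg=200_000,
    lifetime_prompts=1e10,
)
print(f"{fp.lifecycle_kg_per_prompt():.1e} kg CO2e per prompt")
print(f"{fp.understatement_factor():.1f}x the inference-only figure")
```

Water and e-waste could be allocated the same way. The result depends heavily on the assumed service life and prompt volume, which is one reason lifecycle figures need to be disclosed rather than inferred.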

Despite improvements in model-level efficiency, explosive growth in usage can more than offset these gains. The effect is analogous to designing a more fuel-efficient car and then producing a thousand times as many: overall emissions still rise. Global energy and water demand linked to AI infrastructure is projected to double by 2030, making efficiency alone an insufficient sustainability metric.
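The car analogy is an instance of the rebound effect: total impact is per-unit impact multiplied by volume, so it falls only if efficiency improves faster than usage grows. A minimal sketch; the growth factors in the usage lines are hypothetical.

```python
def impact_ratio(efficiency_gain: float, volume_growth: float) -> float:
    """New total impact divided by old total impact.

    Total impact = per-unit impact x number of units, so:
    efficiency_gain: factor by which per-unit impact falls (10 means 10x lower).
    volume_growth:   factor by which usage grows (1000 means 1000x more).
    A result above 1 means total impact has risen despite the efficiency gain.
    """
    return volume_growth / efficiency_gain

# A car twice as fuel-efficient, produced in 1,000x the numbers:
print(impact_ratio(efficiency_gain=2, volume_growth=1000))       # 500.0

# A 44-fold per-prompt gain is erased if prompt volume grows more than 44-fold.
print(impact_ratio(efficiency_gain=44, volume_growth=100) > 1)   # True
```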

3. A Holistic Lens: The ESG Framework for AI Sustainability

A meaningful assessment of AI sustainability requires a holistic ESG perspective.

Environmental (E)

AI data centres demand uninterrupted electricity, placing sustained pressure on power grids. Water footprints are both direct (cooling) and indirect (electricity generation), with heightened impact in water-stressed regions such as India.
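One way to combine the direct and indirect footprints is to multiply IT energy by the site's Water Usage Effectiveness (WUE, litres of on-site water per kWh of IT energy) for the direct part, and multiply total facility energy (IT energy times PUE) by the water intensity of the electricity supply for the indirect part. The sketch assumes that formulation; the parameter values are hypothetical, and real grid water intensity varies widely by region and season.

```python
def operational_water_litres(
    it_energy_kwh: float,
    wue_l_per_kwh: float,          # on-site cooling water per kWh of IT energy
    pue: float,                    # total facility energy / IT energy
    grid_water_l_per_kwh: float,   # water consumed generating 1 kWh of electricity
) -> dict:
    """Split operational water use into direct (cooling) and indirect (power) parts."""
    direct = it_energy_kwh * wue_l_per_kwh
    # Indirect water scales with all electricity drawn, including cooling overhead.
    indirect = it_energy_kwh * pue * grid_water_l_per_kwh
    return {"direct": direct, "indirect": indirect, "total": direct + indirect}


# Hypothetical values for a 1,000 kWh workload.
print(operational_water_litres(1_000, wue_l_per_kwh=1.8, pue=1.2, grid_water_l_per_kwh=3.1))
```

The indirect term is why a facility with low on-site water use can still add to basin-level stress.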

Additionally, AI infrastructure carries significant carbon debt from hardware manufacturing, compounded by accelerated refresh cycles and limited recycling capacity—especially in parts of the Global South where circular economy systems remain weak.
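Embodied carbon is a one-off cost, so the refresh cycle determines how much of it lands each year. The sketch spreads manufacturing emissions evenly over the service life and uses the figure of roughly 300 kg CO₂ per high-end GPU cited earlier as a lower bound; the fleet size and refresh intervals are hypothetical.

```python
def annual_embodied_tonnes(units: int, kg_per_unit: float, refresh_years: float) -> float:
    """Embodied emissions per year (t CO2) if hardware is replaced every `refresh_years`."""
    return units * kg_per_unit / refresh_years / 1000

def annual_ewaste_units(units: int, refresh_years: float) -> float:
    """Devices retired per year at a steady-state refresh rate."""
    return units / refresh_years


fleet = 10_000        # hypothetical GPU fleet
kg_per_gpu = 300      # lower-bound figure from the text

for years in (5, 3):
    print(
        f"refresh every {years} y: "
        f"{annual_embodied_tonnes(fleet, kg_per_gpu, years):,.0f} t CO2/yr, "
        f"{annual_ewaste_units(fleet, years):,.0f} GPUs retired/yr"
    )
```

Shortening the cycle from five to three years raises annual embodied emissions, and the number of devices entering the waste stream, by two-thirds with no change in workload.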

Social (S)

AI systems depend on extensive annotation and moderation pipelines, often staffed by low-wage workers in the Global South. Reports of Kenyan data workers earning as little as USD 2 per hour and being exposed to toxic content raise concerns around fair pay, mental health, and labour protections.

Beyond labour, large data centres affect surrounding communities through land use, water extraction, noise, and pollution, directly influencing local health and ecosystems.

Governance (G)

Governance determines whether sustainability claims are credible or performative. The European Union has set early precedents by mandating disclosure of standardised data-centre KPIs, including:

- Energy consumption

- Power Usage Effectiveness (PUE)

- Share of renewable energy

Such transparency builds public trust. In contrast, many Global South contexts lack consistent disclosure regimes, independent audits, and enforceable ESG reporting norms.
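The three KPIs listed above reduce to simple ratios over metered energy. A minimal sketch of how an operator might compute them for a reporting period; the class and field names are hypothetical and do not reproduce the EU's official reporting template, and how renewable energy is counted (on-site generation, contracts, certificates) is a methodological choice the sketch does not address.

```python
from dataclasses import dataclass

@dataclass
class DataCentreReport:
    """Metered energy for one reporting period (MWh). Field names are illustrative."""
    total_facility_mwh: float   # everything the site draws: IT, cooling, lighting, losses
    it_equipment_mwh: float     # servers, storage, and network gear only
    renewable_mwh: float        # portion of total consumption counted as renewable

    def pue(self) -> float:
        # Power Usage Effectiveness: 1.0 would mean zero overhead beyond IT load.
        return self.total_facility_mwh / self.it_equipment_mwh

    def renewable_share(self) -> float:
        return self.renewable_mwh / self.total_facility_mwh


# Hypothetical figures for illustration.
report = DataCentreReport(total_facility_mwh=13_000, it_equipment_mwh=10_000, renewable_mwh=6_500)
print(f"Energy: {report.total_facility_mwh:,.0f} MWh")
print(f"PUE: {report.pue():.2f}")
print(f"Renewable share: {report.renewable_share():.0%}")
```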

4. Amplified Challenges in the Global South

The Global South experiences disproportionate sustainability risks from AI expansion due to structural realities.

In India, peak electricity demand reached 250 GW in summer 2024 and is projected to exceed 400 GW by 2030. Data-centre capacity is expected to grow from ~1.4 GW to ~9 GW, raising its share of national peak demand from under 1% to nearly 3%.
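The shares follow from dividing data-centre load by peak demand. The text does not state the basis of the 3% figure, so the sketch makes two assumptions: that the ~9 GW figure refers to the same 2030 horizon, and that it describes IT load, to which a facility overhead (PUE) of about 1.3 is added. Capacity alone gives 2.25% for 2030; with that overhead the share is about 2.9%.

```python
def share_of_peak_pct(load_gw: float, peak_gw: float) -> float:
    """Data-centre load as a percentage of national peak demand."""
    return 100 * load_gw / peak_gw

# Figures from the text.
peak_2024, peak_2030 = 250, 400   # GW
dc_2024, dc_2030 = 1.4, 9         # GW of data-centre capacity

ASSUMED_PUE = 1.3  # assumption: capacity figures describe IT load only

print(f"2024: {share_of_peak_pct(dc_2024, peak_2024):.2f}%")
print(f"2030, capacity only: {share_of_peak_pct(dc_2030, peak_2030):.2f}%")
print(f"2030, with overhead: {share_of_peak_pct(dc_2030 * ASSUMED_PUE, peak_2030):.2f}%")
```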

Similar patterns appear across Southeast Asia, where fossil-fuel-heavy grids dominate. Without targeted decarbonisation policies, AI growth directly translates into higher emissions.
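Operational emissions are energy drawn multiplied by the grid's carbon intensity, so the same facility emits far more on a fossil-heavy grid. The sketch compares one hypothetical 100 MW facility running year-round at a 90% load factor on two grids; the intensities are illustrative placeholders, not measured values for any country.

```python
HOURS_PER_YEAR = 8_760

def annual_emissions_t(facility_mw: float, load_factor: float, t_co2_per_mwh: float) -> float:
    """Annual operational emissions (t CO2) = energy drawn (MWh) x grid carbon intensity."""
    energy_mwh = facility_mw * HOURS_PER_YEAR * load_factor
    return energy_mwh * t_co2_per_mwh

# Hypothetical grid intensities (t CO2 per MWh).
grids = {"fossil-heavy grid": 0.7, "low-carbon grid": 0.1}

for name, intensity in grids.items():
    print(f"{name}: {annual_emissions_t(100, 0.9, intensity):,.0f} t CO2/yr")
```

Efficiency gains inside the facility shrink the energy term, but only decarbonising the grid shrinks the intensity term.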

Water stress compounds the challenge. AI infrastructure competes with agriculture and domestic use, intensifying basin-level conflicts. Meanwhile, the Global South supplies much of the world’s AI labour and critical minerals yet bears the environmental and social costs, fuelling concerns about digital colonialism.

5. Enforceable Sustainability: Moving from Pledges to Proof

Voluntary sustainability pledges are insufficient. Enforceable mechanisms are required across finance, regulation, and labour.

The Financial Lever

- Insurance regulators should enforce ESG-linked underwriting standards, building on practices such as those adopted by Zurich Insurance, so that insurers can deny coverage to, or charge higher premiums for, unsustainable AI operations.

- Central banks and financial regulators should promote sustainability-linked lending and bonds, tying borrowing costs to measurable energy, water, and social performance targets.
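Sustainability-linked loans commonly use a margin ratchet: the interest margin steps down when agreed KPI targets are met and steps up when they are missed. The sketch assumes a symmetric ratchet of 5 basis points per KPI and four hypothetical energy, water, and social targets; actual terms are negotiated case by case.

```python
from dataclasses import dataclass

@dataclass
class KpiTarget:
    actual: float
    target: float
    higher_is_better: bool

    def met(self) -> bool:
        return self.actual >= self.target if self.higher_is_better else self.actual <= self.target

def ratcheted_margin_bps(base_bps: float, kpis: dict[str, KpiTarget], step_bps: float = 5) -> float:
    """Lower the margin by `step_bps` per KPI met; raise it by `step_bps` per KPI missed."""
    adjustment = sum(-step_bps if k.met() else step_bps for k in kpis.values())
    return base_bps + adjustment


# Hypothetical annual KPI results for an AI data-centre borrower.
kpis = {
    "pue": KpiTarget(actual=1.25, target=1.3, higher_is_better=False),           # met
    "wue_l_per_kwh": KpiTarget(actual=1.9, target=1.5, higher_is_better=False),  # missed
    "renewable_share": KpiTarget(actual=0.6, target=0.5, higher_is_better=True), # met
    "living_wage_share": KpiTarget(actual=1.0, target=1.0, higher_is_better=True),  # met
}
print(ratcheted_margin_bps(200, kpis))  # 190
```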

Policy and Regulation Lever

- Planning ministries should mandate performance-based permits for new AI data centres, drawing lessons from Singapore’s PUE < 1.3 requirement.

- Energy and data regulators should require public disclosure of standardised ESG metrics, aligned with EU data-centre KPI regimes.

- Securities regulators, including those implementing India’s SEBI Business Responsibility and Sustainability Reporting (BRSR) framework, should mandate independent ESG assurance.

- Labour ministries should enforce minimum standards for data work, including living wages, safety protections, grievance redress, and formal contracts.
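A performance-based permit can be expressed as a set of conditions a proposed facility must meet before approval. In the sketch, the PUE ceiling of 1.3 follows the Singapore example in the text (applied as a strict bound, as written there); the water, renewable-energy, and disclosure conditions and their thresholds are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class DataCentreProposal:
    design_pue: float
    design_wue_l_per_kwh: float
    renewable_share: float             # contracted share of supply, 0..1
    commits_to_public_disclosure: bool

# Thresholds: PUE from the Singapore example in the text; the rest are hypothetical.
MAX_PUE = 1.3
MAX_WUE = 1.5
MIN_RENEWABLE_SHARE = 0.5

def permit_failures(p: DataCentreProposal) -> list[str]:
    """Return the conditions a proposal fails; an empty list means it qualifies."""
    failures = []
    if not p.design_pue < MAX_PUE:
        failures.append(f"PUE {p.design_pue} is not below {MAX_PUE}")
    if p.design_wue_l_per_kwh > MAX_WUE:
        failures.append(f"WUE {p.design_wue_l_per_kwh} L/kWh exceeds {MAX_WUE}")
    if p.renewable_share < MIN_RENEWABLE_SHARE:
        failures.append(f"renewable share {p.renewable_share:.0%} below {MIN_RENEWABLE_SHARE:.0%}")
    if not p.commits_to_public_disclosure:
        failures.append("no commitment to standardised public disclosure")
    return failures


proposal = DataCentreProposal(1.4, 1.2, 0.6, True)
print(permit_failures(proposal))  # ['PUE 1.4 is not below 1.3']
```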

6. Collaboration and Shared Responsibility

Effective enforcement requires coordinated action:

- Policymakers must define mandatory rules and disclosures

- Technologists must embed energy, water, and carbon tracking into AI systems

- Researchers must provide independent verification
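Embedding tracking can start with integrating sampled power draw over a job and converting the result with site and grid factors. The sketch assumes power readings are already available as (seconds, watts) samples; how they are collected (hardware counters, metered power distribution units, or a tracking library) is outside its scope, and the conversion factors in the usage line are hypothetical.

```python
from typing import Sequence

def energy_kwh(samples: Sequence[tuple[float, float]]) -> float:
    """Integrate (seconds, watts) samples with the trapezoidal rule; returns kWh."""
    joules = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        joules += (p0 + p1) / 2 * (t1 - t0)
    return joules / 3_600_000  # 1 kWh = 3.6 million joules

def job_footprint(samples: Sequence[tuple[float, float]], pue: float, kg_co2_per_kwh: float,
                  wue_l_per_kwh: float, grid_water_l_per_kwh: float) -> dict:
    """Per-job energy, carbon, and water derived from measured IT power draw."""
    it_kwh = energy_kwh(samples)
    facility_kwh = it_kwh * pue  # add cooling and other site overhead
    return {
        "it_kwh": it_kwh,
        "facility_kwh": facility_kwh,
        "kg_co2": facility_kwh * kg_co2_per_kwh,
        "water_l": it_kwh * wue_l_per_kwh + facility_kwh * grid_water_l_per_kwh,
    }


# Hypothetical job: a steady 300 W for one hour, sampled every 30 minutes.
samples = [(0, 300), (1800, 300), (3600, 300)]
print(job_footprint(samples, pue=1.2, kg_co2_per_kwh=0.5, wue_l_per_kwh=1.8, grid_water_l_per_kwh=3.1))
```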

Countries in the Global South should collaborate through regional compacts to ensure clean power access, shared water governance, and fair labour standards. Public registries, lifecycle KPIs, and annual third-party audits are essential to track real progress.
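Annual third-party audits give public registries something to check self-reported figures against. A minimal sketch of that comparison, assuming both the operator's disclosure and the auditor's findings are recorded as named KPIs; the 5% relative tolerance and the sample figures are hypothetical.

```python
import math

def audit_discrepancies(reported: dict[str, float], audited: dict[str, float],
                        tolerance: float = 0.05) -> dict[str, str]:
    """Flag KPIs the operator did not disclose, or whose reported value differs
    from the audited value by more than `tolerance` (relative to the larger value)."""
    flagged = {}
    for kpi, verified in audited.items():
        if kpi not in reported:
            flagged[kpi] = "not disclosed"
        elif not math.isclose(reported[kpi], verified, rel_tol=tolerance):
            flagged[kpi] = f"reported {reported[kpi]}, audited {verified}"
    return flagged


# Hypothetical registry entry and audit for one facility.
reported = {"pue": 1.20, "water_megalitres": 100.0}
audited = {"pue": 1.35, "water_megalitres": 102.0, "renewable_share": 0.4}
print(audit_discrepancies(reported, audited))
```

Flagging undisclosed metrics alongside mismatched ones matters: an omission can hide as much as a misstatement.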

7. Conclusion: Towards Verifiable Sustainability and Accountability

A critical ESG examination reveals that many “green AI” claims are illusory. Breaking this mirage requires demanding transparent, independently verified lifecycle metrics from all AI operators.

The core question remains:

If a company cannot publish its true, independently audited ESG lifecycle impact, should it be allowed to scale?

📥 AI Sustainability 101 — AI Sustainability Explainer Deck (PDF)

👉 Download the AI Sustainability Explainer Deck (PDF)

💬 Join the Conversation

Have thoughts, experiences, or questions about AI sustainability? Share your comments, discuss with global experts, and connect with the community:

👉 Reach out via the Contact page

📧 Write to us: [email protected]

🌍 Follow GlobalSouth.AI

Stay connected and join the conversation on AI governance, fairness, safety, and sustainability.

- LinkedIn: https://linkedin.com/company/globalsouthai

- Substack Newsletter: https://newsletter.globalsouth.ai/

Subscribe to stay updated on new case studies, frameworks, and Global South perspectives on responsible AI.

Related Posts

When Good Intentions Go Global: Why the EU AI Act Doesn’t Fit the Global South

Why the EU AI Act—designed for data-rich, institutionally mature European economies—breaks down when applied to the Global South.

Beyond America's AI Action Plan: A Global South Response on Fairness

America's AI Action Plan's redefinition of fairness, by removing Diversity, Equity, and Inclusion (DEI), risks hard-coding inequities for the Global South, necessitating a proactive response to define its own culturally and contextually relevant AI fairness standards.

Mind the Gap: Why the NIST AI Risk Framework Breaks Down in the Global South

The NIST AI Risk Management Framework (AI RMF) is increasingly treated as a global blueprint for “trustworthy AI.” But what happens when a framework designed for resource-rich Western institutions is applied to the Global South?