AI Hiring Gone Wrong: How Eightfold’s Social Media Profiling Sparked a Fairness and Consent Crisis

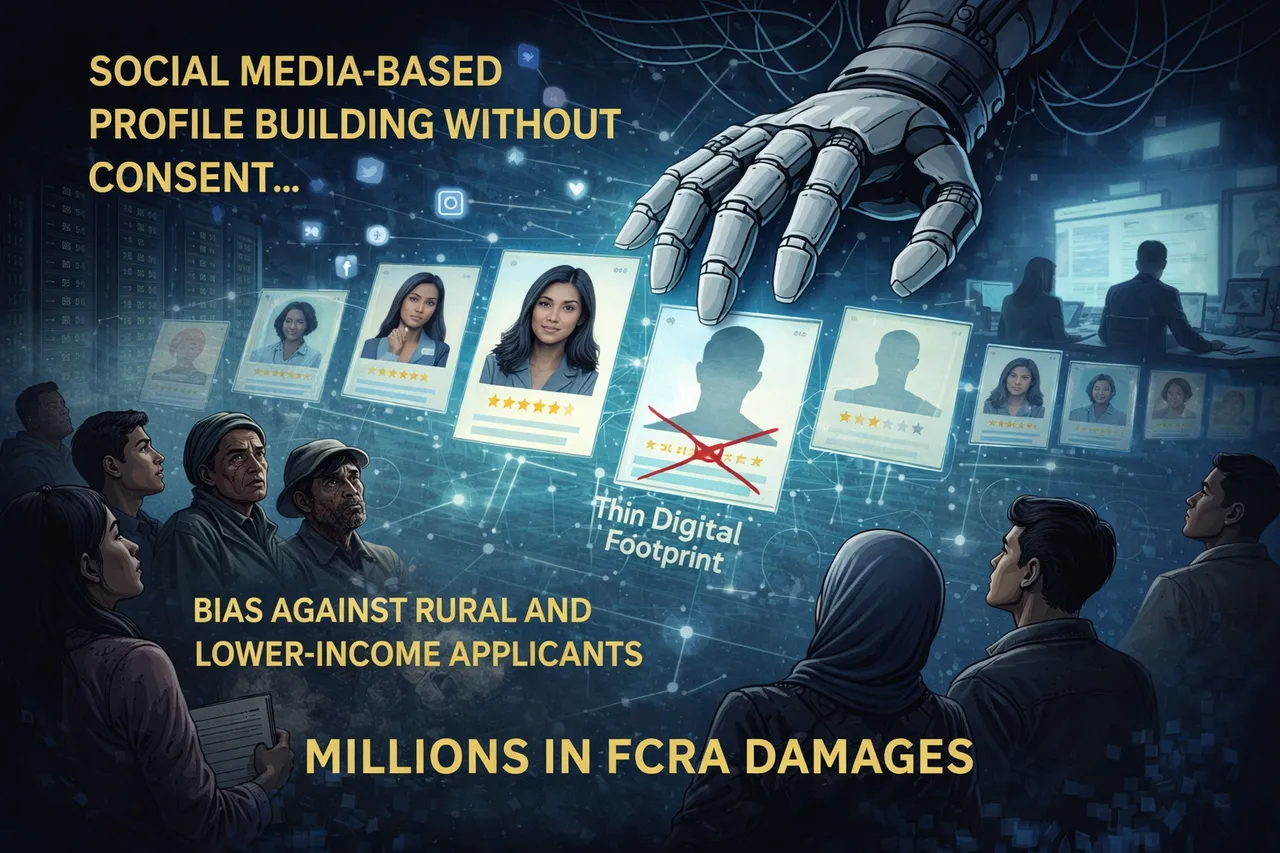

A 2026 lawsuit against Eightfold AI reveals how job applicants may have been secretly scored using social media and online data, without consent or transparency. The case exposes how AI hiring systems can replicate bias, exclude candidates with thin digital footprints, and create massive legal and fairness risks. What happens when invisible algorithms decide who gets a chance?

AI Fairness 101 - Real-World Incidents

Part 8 of 10

Table of Contents

- 🎥 Explained: AI Hiring Gone Wrong: How Eightfold’s Social Media Profiling Sparked a Fairness and Consent Crisis

- AI Fairness 101 - Real Incident (2026)

- Incident Report: Lessons in Algorithmic Bias from Eightfold’s Social Media Profiling in Hiring

- ⚠️ Key Takeaway

- 1. The Incident: What Happened at Eightfold AI?

- 2. The Ripple Effect: Understanding the Impact 👤

- 3. Anatomy of a Breakdown: The AI Lifecycle Failure ⚙️

- 4. The Bias Catalog: How Fairness Fails ⚠️

- 5. A Global South Lens: The Borderless Risk 🌍

- 6. The Bigger Picture: A Roadmap for Accountability 🚀

- 7. References 📚

🎥 Explained: AI Hiring Gone Wrong: How Eightfold’s Social Media Profiling Sparked a Fairness and Consent Crisis

AI Fairness 101 - Real Incident (2026)

Incident Report: Lessons in Algorithmic Bias from Eightfold’s Social Media Profiling in Hiring

- 🛠️ System used: Eightfold AI allegedly created hidden talent profiles and 0-5 match scores for job seekers

- 👥 Most affected group: applicants evaluated without clear notice, especially people with sparse or atypical digital footprints

- ⚠️ Core allegation: social media, web-tracking, and other online signals were used for hiring evaluation without meaningful consent

- 🚫 Outcome: candidates could be filtered or down-ranked by an invisible profile they never saw and could not correct

⚠️ Key Takeaway

A hiring model becomes unfair long before the final rejection if it builds secret profiles from data people never knowingly gave for employment decisions. Opaque scoring erodes both fairness and due process.

1. The Incident: What Happened at Eightfold AI?

Imagine applying for hundreds of jobs, possessing the exact skills required, yet feeling an “unseen force” systematically slamming the door in your face. In January 2026, this frustration boiled over into a class-action lawsuit in California that could redraw the boundaries of job seekers’ rights. The litigation against Eightfold AI isn’t just another tech dispute; it is a reckoning for an era in which algorithmic “black boxes” have shifted from helpful filters to invisible gatekeepers of our professional destinies.

The core of the January 2026 lawsuit alleges a massive, unauthorized profiling operation. Plaintiffs Erin Kistler and Sruti Bhaumik claim that Eightfold AI has been vacuuming up data to build secret “talent profiles,” assigning individuals numeric “match scores” from 0 to 5 without their knowledge or consent. According to the complaint, these scores aren’t just based on resumes, but on data harvested from across the web.

Key Allegations and Entities Involved

- The Plaintiffs: Erin Kistler and Sruti Bhaumik, representing U.S. job seekers evaluated by the tool.

- Alleged Data Sources: Scraping of social media profiles, location data, internet activity, cookies, and various web-tracking signals.

- Major Corporations Using the Software: Microsoft, PayPal, Salesforce, and Bayer.

Eightfold AI denies these claims, asserting that its software relies only on “intentionally shared data” and is designed to support “skills-based hiring” by masking sensitive attributes. The two accounts cannot both be right, and the gap between them is high-stakes. So What? The distinction between “intentionally shared data” and “scraped data” is the front line of digital privacy. If an AI builds a profile from unconsented digital footprints, it bypasses an individual’s right to manage their own professional identity, turning every tweet or location check-in into a potential liability they never authorized.

Behind these scores are real people whose careers may have been stalled by an algorithm they didn’t even know was watching.

2. The Ripple Effect: Understanding the Impact 👤

In our increasingly automated economy, “due process” is undergoing a digital transformation. When secret scoring systems evaluate candidates in the shadows, it creates a staggering power imbalance. This lack of transparency strips job seekers of their agency, leaving them unable to challenge or even see the metrics that determine their livelihood.

This “unseen force” is more than a feeling; it is a structural shift in how labor is valued. The impacts include:

- The Erosion of Informed Consent: Job seekers are being judged by data points they never agreed to provide for employment purposes.

- Risk of Systemic Filtering: AI can reject perfectly qualified candidates before a human recruiter ever sees a resume, creating a “false negative” trap for talent.

- Legal and Reputational Risks for Employers: Companies purchasing these tools can remain legally liable for the outcomes, regardless of whether they understand the underlying code.

The financial “So What?” is where this becomes a boardroom crisis. The lawsuit argues that Eightfold acted as an unlicensed consumer-reporting agency, which would bring its scoring under the Fair Credit Reporting Act (FCRA). Under the FCRA, a technical “glitch” or a failure to provide notice isn’t just a PR problem; where a violation is found to be willful, statutory damages can run between $100 and $1,000 per violation. For a platform processing millions of profiles, a simple lack of transparency could become a legal exposure in the billions of dollars. These impacts aren’t accidents; they follow from specific choices made during the AI’s development.
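As a rough back-of-the-envelope sketch of how that exposure scales, the arithmetic below multiplies the $100 to $1,000 per-violation range cited above by a number of affected profiles. The profile counts are illustrative assumptions, not figures from the complaint, and any actual damages would depend on what a court finds.

```python
# Per-violation statutory range cited in the text (USD).
MIN_PER_VIOLATION = 100
MAX_PER_VIOLATION = 1_000


def exposure_range(violations: int) -> tuple[int, int]:
    """Return the (low, high) statutory exposure in USD for a violation count."""
    if violations < 0:
        raise ValueError("violations must be non-negative")
    return violations * MIN_PER_VIOLATION, violations * MAX_PER_VIOLATION


if __name__ == "__main__":
    # Hypothetical profile counts, chosen only to show how the total scales.
    for profiles in (100_000, 1_000_000, 5_000_000):
        low, high = exposure_range(profiles)
        print(f"{profiles:>9,} profiles: ${low:,} to ${high:,}")
```

Under these assumptions, one million profiles gives a range of $100 million to $1 billion, so the total only reaches multiple billions toward the top of the per-violation range and with several million affected profiles.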

3. Anatomy of a Breakdown: The AI Lifecycle Failure ⚙️

To truly dismantle algorithmic harm, we must look at the “how.” AI failures aren’t just bugs in the code; they are baked into the system during the Data, Design, and Deployment phases.

| Stage | Failure type | Technical and ethical context | Why it matters |
|---|---|---|---|
| Data Collection | Violation of “Data-Minimization” | Harvesting data beyond applicant submissions, including location and device activity | This introduces representation bias by over-weighting digital traces that have no established link to job performance |
| Model Design | Reliance on “Historical Hiring” | Training models on past recruiter “tastes” and LinkedIn-style data | These models lack counterfactual data on people who were not hired but would have succeeded, so the system automates the old resume screen and amplifies old prejudices |
| Deployment | Absence of Transparency | Providing no notice of scoring and no mechanism for candidates to correct errors | This creates a procedural unfairness trap; labeling someone a “team player” from a social profile is scientifically shaky and ethically indefensible |

These technical missteps aren’t just bugs; they are the architects of a new, automated form of discrimination.
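One way to picture the data-minimization failure in the first row of the table is as a missing allowlist at the point of collection. The sketch below is a hypothetical illustration, not Eightfold’s pipeline: the field names and the `APPLICANT_SUBMITTED_FIELDS` set are assumptions. It drops any signal the applicant did not submit and records what was dropped, so the exclusion itself can be audited.

```python
# Hypothetical allowlist: fields an applicant knowingly submits for a job.
APPLICANT_SUBMITTED_FIELDS = frozenset(
    {"name", "resume_text", "skills", "work_history", "education"}
)


def minimize(raw_profile: dict) -> tuple[dict, list[str]]:
    """Split a raw profile into allowed fields and a sorted list of dropped keys."""
    kept = {k: v for k, v in raw_profile.items() if k in APPLICANT_SUBMITTED_FIELDS}
    dropped = sorted(k for k in raw_profile if k not in APPLICANT_SUBMITTED_FIELDS)
    return kept, dropped


if __name__ == "__main__":
    # Illustrative record mixing submitted data with the kinds of signals
    # the complaint alleges were collected (location, cookies, social media).
    raw = {
        "name": "A. Candidate",
        "skills": ["python", "sql"],
        "location_history": ["..."],
        "tracking_cookies": ["..."],
        "social_media_posts": ["..."],
    }
    kept, dropped = minimize(raw)
    print("kept:", sorted(kept))
    print("dropped:", dropped)
```

The design choice worth noting is that the filter is an allowlist rather than a blocklist: a new tracking signal is excluded by default until someone justifies adding it.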

4. The Bias Catalog: How Fairness Fails ⚠️

“Bias” in AI is rarely a single error; it is a structural flaw that privileges specific digital archetypes while marginalizing others based on their digital footprints.

- 🌐 Representation Bias: Relying on scraped web data skews in favor of those with “loud” digital footprints. This marginalizes older workers or lower-income individuals who may have limited online activity, leading to lower scores despite their skills.

- 📜 Historical Bias: By training on past hiring data, the AI reproduces the specific “tastes” of previous recruiters, such as a preference for “prestige” schools or specific “pedigreed” employers, rather than predicting actual success.

- 🎭 Proxy Bias: Eightfold claims to “mask” sensitive attributes like race or gender, but the AI can easily use “quality of education” or inferred traits like “introvert” as proxies for socioeconomic status or race.

- 🔒 Privacy Bias: The unconsented harvesting of data disproportionately harms communities with weaker legal protections, effectively turning the hiring process into a tool for digital surveillance.

So What? Masking race is useless if the algorithm uses “social media signals” or “education quality” as a stand-in. This issue takes on global proportions as these tools are exported to markets far beyond Silicon Valley.
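A simple first check for whether a supposedly neutral feature is acting as a proxy is to look at how unevenly it is distributed across the groups that masking was meant to protect. The sketch below uses made-up records and hypothetical field names (`group`, `elite_school`) and compares the share of each group that has the feature. A large gap suggests a model could partly recover group membership from that feature even when the protected attribute is hidden. It is a first-pass diagnostic, not a complete fairness audit.

```python
from collections import defaultdict


def feature_rate_by_group(records, group_key, feature_key):
    """Return {group: share of records in that group where the feature is truthy}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for rec in records:
        g = rec[group_key]
        totals[g] += 1
        if rec[feature_key]:
            hits[g] += 1
    return {g: hits[g] / totals[g] for g in totals}


def max_rate_gap(rates):
    """Largest difference in feature rate between any two groups."""
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Fabricated toy data for illustration only.
    records = [
        {"group": "A", "elite_school": True},
        {"group": "A", "elite_school": True},
        {"group": "A", "elite_school": True},
        {"group": "A", "elite_school": False},
        {"group": "B", "elite_school": True},
        {"group": "B", "elite_school": False},
        {"group": "B", "elite_school": False},
        {"group": "B", "elite_school": False},
    ]
    rates = feature_rate_by_group(records, "group", "elite_school")
    print(rates)                # share of each group with the feature
    print(max_rate_gap(rates))  # gap between the highest and lowest share
```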

5. A Global South Lens: The Borderless Risk 🌍

AI ethics cannot afford to be Western-centric. When US-based vendors act as digital gatekeepers, they export “North-centric biases” that can wreak havoc in emerging markets.

- Lack of Robust Legal Protections: Many nations in the Global South lack FCRA-equivalent laws. Without these protections, job seekers in India, Nigeria, or Brazil may be scored and filtered with little notice or practical legal recourse.

- The Digital Divide: In regions where online histories are sparse, Western-trained AI may systematically misjudge potential. A rural candidate’s lack of a “LinkedIn-style” footprint is often misinterpreted as a lack of professional caliber.

- Global Power Imbalances: A Western algorithm might misinterpret a candidate’s social media activism or informal employment history as a “lack of professionalism.”

So What? When Northern data practices are forced onto Southern labor markets, they entrench global inequities. We are seeing a new form of digital gatekeeping where a Western algorithm decides who gets to participate in the global remote-work economy.

6. The Bigger Picture: A Roadmap for Accountability 🚀

The legal landscape is shifting from “move fast and break things” toward stricter accountability. We are witnessing a “pincer movement” of litigation: cases like Mobley v. Workday target discriminatory outcomes, while the Eightfold lawsuit targets opaque processes. Meanwhile, the EU AI Act and South Korea’s AI laws are already demanding risk management and human oversight for high-impact systems like hiring.

Strategic Checklist for Organizations

- Treat AI as a Regulated Instrument: Contracts must mandate the disclosure of data sources and allow for independent bias audits.

- Implement Outcome-Based Training: Stop training models to imitate past recruiter decisions on resumes or LinkedIn profiles. Train against actual job-performance outcomes, and include counterfactual data on candidates who were not hired wherever it can be obtained.

- Establish Cross-Functional Governance: Integration between HR, Legal, and IT is mandatory to document data sources and ensure human-in-the-loop oversight.
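As one hedged illustration of what an independent bias audit might compute, the sketch below calculates selection rates per group and each group’s adverse impact ratio, flagging ratios below 0.8 in line with the four-fifths rule of thumb used in U.S. employment-selection guidance. The group labels and counts are invented for illustration, and a real audit would also need statistical testing and legal review.

```python
FOUR_FIFTHS_THRESHOLD = 0.8


def selection_rates(outcomes):
    """outcomes maps group -> (selected, applicants); returns group -> rate."""
    rates = {}
    for group, (selected, applicants) in outcomes.items():
        if applicants <= 0:
            raise ValueError(f"group {group!r} has no applicants")
        rates[group] = selected / applicants
    return rates


def adverse_impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group had any selections")
    return {g: r / best for g, r in rates.items()}


def flagged_groups(outcomes, threshold=FOUR_FIFTHS_THRESHOLD):
    """Sorted list of groups whose adverse impact ratio falls below the threshold."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


if __name__ == "__main__":
    # Invented counts: (candidates advanced by the tool, candidates scored).
    outcomes = {
        "rich_digital_footprint": (50, 100),
        "sparse_digital_footprint": (25, 100),
    }
    print(adverse_impact_ratios(outcomes))
    print(flagged_groups(outcomes))
```

Grouping by digital-footprint size here is itself an assumption, chosen because it mirrors the representation bias described earlier; the same calculation applies to any grouping an auditor is required or chooses to examine.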

If you use AI to sort human lives, you own the outcome. There is no AI exemption for legal or ethical failures.

The 90-Day Audit: Every organization should audit its hiring pipeline within the next three months. Map your data sources, examine training objectives, and demand transparency from your vendors. The “So What?” is simple: if you cannot explain the decision made by the tool, you should not be using it to decide a person’s future. It is time to put humans back at the center of the hiring process.

7. References 📚

- Reuters – Jody Godoy, AI company Eightfold sued for helping companies secretly score job seekers (Jan 21 2026).

- NatLawReview – Andrew R. Lee & Jason M. Loring, AI Hiring Under Fire: What the Eightfold Lawsuit Means for Every Employer (Mar 27 2026).

- Built In – Jeff Rumage, These Job Seekers Say AI Unfairly Blocked Them From Work. Now They’re Suing (Feb 11 2026).

- Fortune – Patrick Kulp, Job seekers are suing an AI hiring tool used by Microsoft and PayPal (Jan 26 2026).

- Faegre Drinker – US and International Developments in Artificial Intelligence (Feb 2 2026), summarizing the lawsuit’s specific FCRA claims.

- Classet – AI Hiring Under Scrutiny: The Eightfold Lawsuit and What It Means for Your Vendor Selection (Feb 11 2026).

